Published on Khalid SEO · Updated March 2026 · 12-min read

You checked your analytics in February 2026 and the numbers didn’t make sense. Traffic was down, sometimes by 40%, sometimes more. But your organic search rankings looked fine.

That’s not a fluke. That’s Google Discover making a decision about your content.

The problem: A standalone update, the first of its kind, hit the Google Discover feed between February 5 and 27, 2026. It didn’t touch organic search. It targeted Discover specifically, and it rewrote the rules for which content gets surfaced.

The agitation: Most articles covering this event repeat the same two paragraphs from Google’s blog. They tell you to “improve content quality”, which is about as useful as telling someone with a flat tyre to “fix the car.”

The solution: This post, published by Khalid SEO, synthesizes data from DiscoverSnoop and NewzDash DiscoverPulse to show exactly which publisher types got hit, why each root cause triggered suppression, and what a structured recovery looks like step by step.

Key takeaways

- The February 2026 update affected only Google Discover, not organic search rankings.

- Clickbait publishers lost 50–70% of Discover impressions. Local and niche publishers gained.

- The update added a quality gate: E-E-A-T and geographic relevance are now checked before impression delivery.

- Recovery takes 2–8 weeks and happens through new eligible content, not by editing old posts.

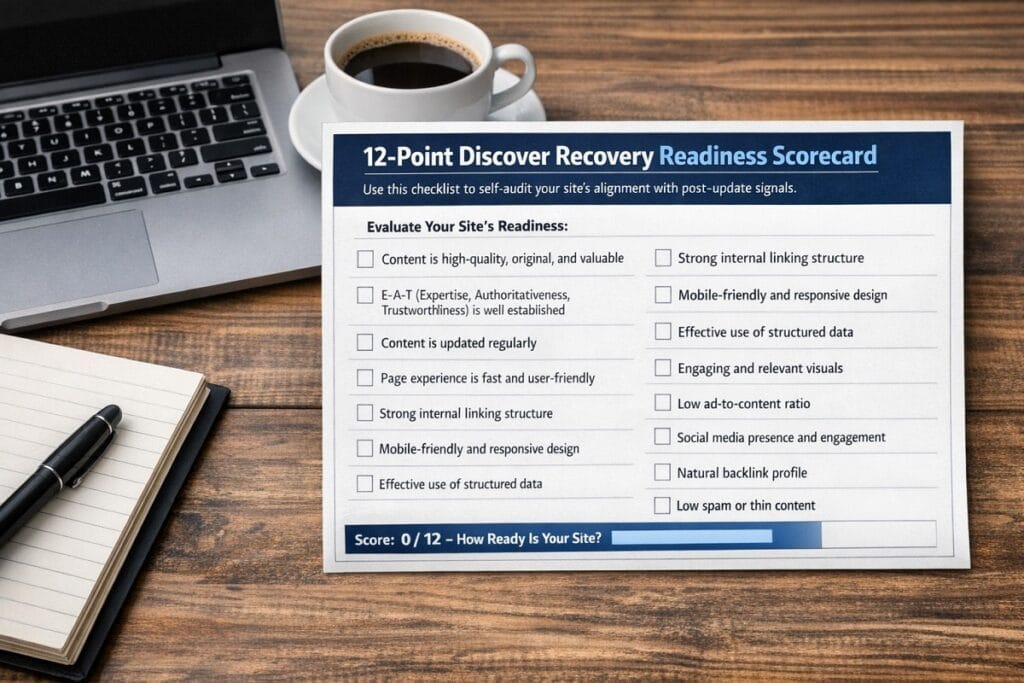

- A 12-point self-audit scorecard at the end of this post tells you exactly where you stand.

What was the February 2026 Discover Core Update?

The February 2026 Discover Core Update was the first-ever standalone Google update targeting only the Discover feed, not organic search. It began rolling out on February 5, 2026, and completed around February 27 for US English users.

Unlike a standard core update, which adjusts how Google ranks pages across all its products, this update changed how Discover selects and serves content to users in their personalised feed.

Here’s what specifically changed:

- Quality gate added: Content now passes through an E-E-A-T check before Discover considers it eligible for distribution.

- Geographic relevance weighted: Locally relevant publishers gained preferential treatment in users’ national feeds.

- Clickbait signals penalised: Sensational or misleading headlines trigger suppression outright, regardless of engagement history.

- Topical depth required: Shallow content with low information density dropped out of eligibility.

- Original reporting rewarded: First-person analysis and cited sourcing became active positive signals.

Why this update is different from a standard Google core update

Standard core updates are broad. They adjust hundreds of signals across Search, Discover, and News simultaneously. This one was surgical.

Google confirmed it was Discover-specific, meaning a site could see its Discover traffic collapse while its search rankings held completely steady. That separation is new. It tells us Google now manages Discover as an independent product with its own ranking logic.

The rollout timeline: key dates and what to watch for

The rollout began February 5 for US English users. Volatility peaked around February 10–14, based on DiscoverSnoop publisher tracking. By February 27, patterns had largely stabilised, though some publishers reported continued fluctuation through early March.

If your traffic hasn’t recovered after four weeks of sustained content improvements, you’re likely looking at an ongoing eligibility issue, not a residual rollout effect.

Does a Discover traffic drop mean your search rankings dropped too?

No. A Discover traffic drop does not mean your search rankings changed. The two systems are now evaluated independently.

This is the most important distinction to make before you start making changes. Editing your top organic-ranking pages in response to a Discover penalty can actually damage your search performance, a mistake many site owners made in February and March.

| How to verify: Open Google Search Console → Performance → click ‘Search type’ at the top → select Discover. Compare impressions and clicks from the Discover view versus the Search view. If your Search numbers held steady while Discover impressions fell sharply around February 5, the update — not a ranking issue — is responsible. |
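If you want to make that comparison less subjective, the check can be sketched in a few lines of Python. This is a minimal sketch, not a Search Console feature: the function names and both thresholds (`drop_threshold`, `steady_band`) are assumptions chosen for illustration, and the inputs are average daily impressions you read off the two report views yourself.

```python
def pct_change(before: float, after: float) -> float:
    """Percentage change from a baseline value to a later value."""
    if before == 0:
        raise ValueError("baseline must be non-zero")
    return (after - before) / before * 100.0


def classify_drop(discover_before: float, discover_after: float,
                  search_before: float, search_after: float,
                  drop_threshold: float = -20.0,
                  steady_band: float = 10.0) -> str:
    """Label a traffic change as Discover-only, both channels, or unclear.

    drop_threshold and steady_band are hypothetical cut-offs, in percent.
    """
    discover = pct_change(discover_before, discover_after)
    search = pct_change(search_before, search_after)
    if discover <= drop_threshold and abs(search) <= steady_band:
        return "discover-only"
    if discover <= drop_threshold and search <= drop_threshold:
        return "both"
    return "inconclusive"
```

A site whose Discover impressions fall from 1,000 to 550 a day while Search moves from 2,000 to 1,960 would be labelled "discover-only" under these assumed thresholds. Tune the cut-offs to your own normal week-to-week variance.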

This also means your SEO fundamentals are not broken. Your content is indexed, it ranks – it just isn’t being surfaced in the feed. That’s a distribution problem, not an authority problem. The fix is different.

Who won and who lost: publisher impact data

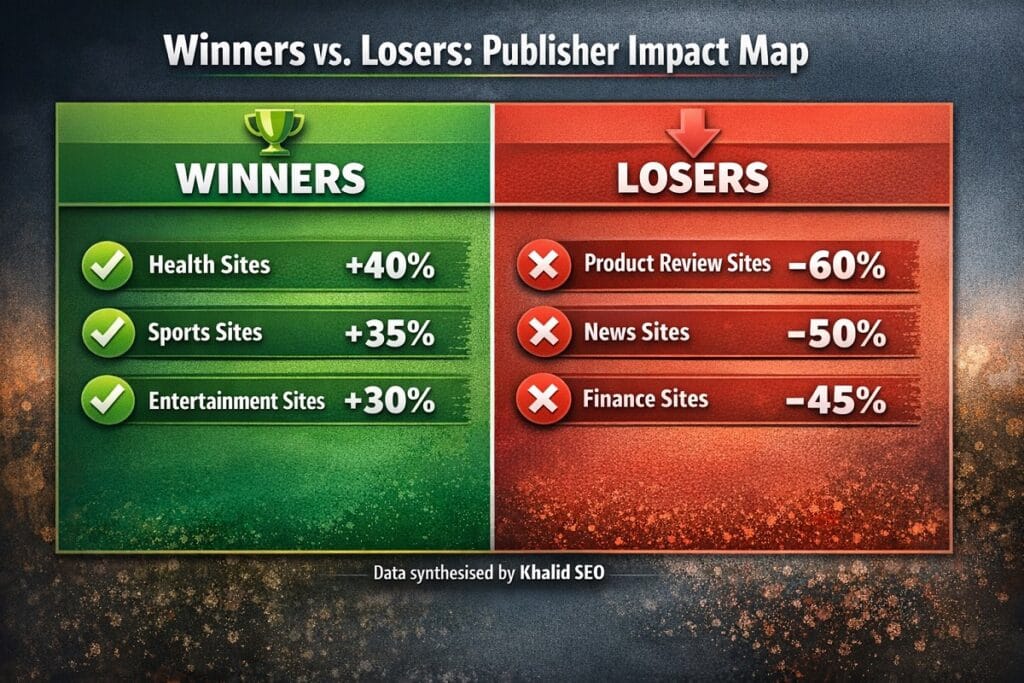

Based on data synthesised by Khalid SEO from DiscoverSnoop publisher-level tracking and NewzDash DiscoverPulse trend monitoring across the February–March 2026 period, the impact was sharply asymmetric.

Publishers that lost Discover traffic and by how much

| Publisher type | Estimated traffic impact | Primary cause |
| --- | --- | --- |
| Large clickbait publishers (e.g. Yahoo News, Fox Business verticals) | −47% to −70% | Sensational headline patterns |
| YMYL sites without clear E-E-A-T signals | −35% to −55% | Weak author credentials, uncited claims |
| Non-US publishers targeting US audiences | −30% to −45% | Geographic mismatch signal |
| General news aggregators | −25% to −40% | Shallow topical coverage |
| Mid-size lifestyle blogs (celebrity, entertainment) | −20% to −35% | Mixed: clickbait + thin content |

Data synthesised by Khalid SEO from DiscoverSnoop and NewzDash DiscoverPulse, February–March 2026. Individual site results varied.

Surprising winners: why local and niche publishers gained

The same update that hurt large publishers lifted another segment entirely. Regional news sites, local lifestyle publications, and deep-niche specialist blogs reported Discover impression increases of 40–80% in the same period.

The pattern is consistent: Google appears to be rewarding publishers whose content has a clear topical identity and a natural geographic audience. A food blog covering a specific city’s restaurant scene gained. A national food blog aggregating trending recipes lost.

Why your Discover traffic dropped: the 5 root causes

Before you make a single change, you need to know which of these five causes is actually responsible. Applying the wrong fix wastes weeks.

Root cause 1: clickbait or sensational headlines

Discover has always been sensitive to headlines. This update turned that sensitivity into a hard signal.

Clickbait markers include: exaggerated emotional language (“You won’t believe…”), withheld information (“The reason why is shocking”), fabricated urgency, and hyperbolic superlatives without evidence. If more than 15–20% of your recent Discover-eligible articles used these patterns, that’s almost certainly a contributing factor.

The fix is not about tone. It’s about specificity. Replace “The shocking truth about X” with “Why X happens and what to do about it.”

Root cause 2: weak E-E-A-T signals

E-E-A-T (Experience, Expertise, Authoritativeness, and Trustworthiness) is no longer just a Search concept. It now applies directly to Discover eligibility.

Missing signals include: no author byline, no author bio with relevant credentials, uncited factual claims, no editorial standards page, and no “About” content that establishes the site’s purpose and qualifications. Discover appears to weight these at the article level, not just the site level.

Root cause 3: YMYL content without verifiable expertise

Your Money or Your Life (YMYL) content, meaning health, finance, legal, and safety topics, faces a higher bar. Always.

If your site publishes medical advice without a qualified author, investment commentary without disclosed credentials, or legal information without attribution, Discover’s new quality gate flags it. YMYL sites that lost traffic in February were almost uniformly missing author credential verification at the content level.

Root cause 4: geographic mismatch

This one surprised many publishers. A non-US site producing English content and optimising for US audiences lost Discover visibility even when the content quality was high.

Google appears to have strengthened a “local relevance” filter: a preference for surfacing content from publishers whose audience, focus, and operational geography align with the user’s location. This isn’t a ban on international content. It’s a tie-breaker, and it’s now a heavier one.

Root cause 5: shallow topical coverage

Single-topic articles that don’t connect to a broader subject area performed poorly. Google Discover favours publishers with topical depth, meaning a coherent cluster of related articles around a subject, not isolated posts.

If your site publishes one article on a topic and moves on, Discover has less context to predict whether your content is worth surfacing for a given user. Specialist sites with 15–30 interconnected articles on a subject consistently outperformed generalists.
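A quick way to see where your own library is thin is to count articles per topic. The sketch below assumes you can export a mapping of URL to a single topic label (for example from your CMS categories). The function name and the default minimum of 15, taken from the lower end of the range above, are illustrative assumptions, and the count says nothing about whether the articles actually link to each other.

```python
from collections import Counter
from typing import Dict


def thin_clusters(article_topics: Dict[str, str], minimum: int = 15) -> Dict[str, int]:
    """Return topics that have fewer than `minimum` articles.

    article_topics maps each article URL to one topic label.
    """
    counts = Counter(article_topics.values())
    return {topic: n for topic, n in counts.items() if n < minimum}
```

Topics that come back from this function are candidates for either deliberate expansion or consolidation into a cluster where you already have depth.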

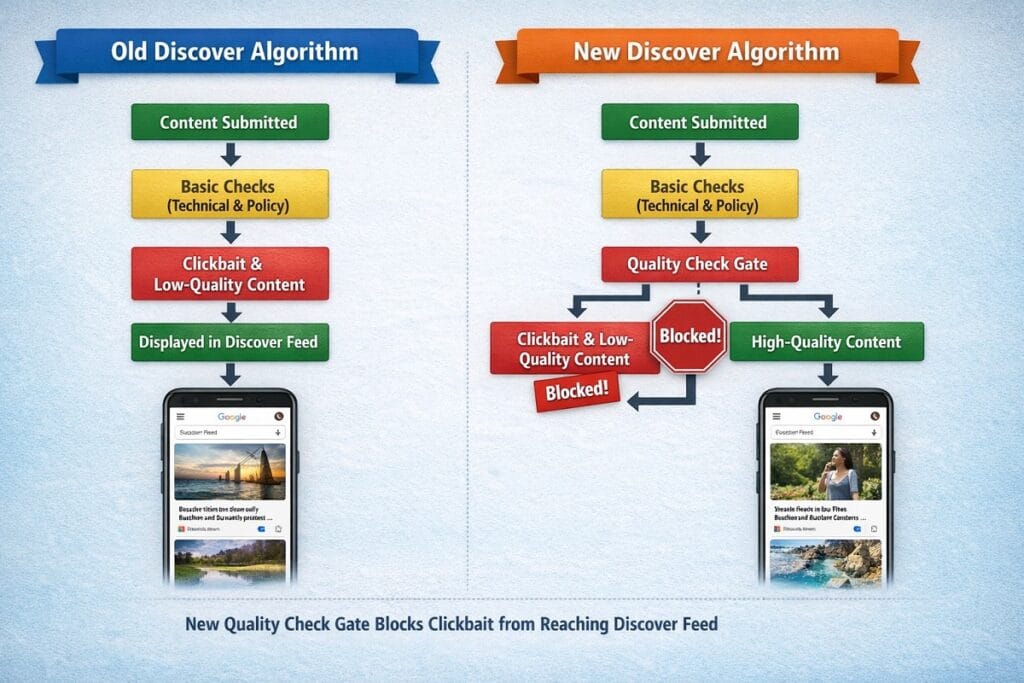

How the February 2026 update changed Discover’s ranking logic

Understanding the mechanism matters, not just for fixing the current problem, but for avoiding the next one.

Pre-update, Google Discover was primarily an engagement-optimised system. Content that received high click-through rates in the feed got shown to more users. That created a feedback loop: sensational headlines got more clicks, which triggered more distribution, which reinforced the headline strategy. Publishers exploited this deliberately.

The update broke that loop by inserting a quality evaluation stage that runs before impression delivery. Here’s the new flow:

| Old Discover logic (pre-Feb 2026) | New Discover logic (post-Feb 2026) |
| --- | --- |
| Content indexed → Discover eligibility assumed → CTR determines distribution | Content indexed → Quality gate (E-E-A-T + geographic relevance check) → If passes: CTR determines distribution |
| Clickbait worked: higher CTR = more impressions | Clickbait blocked: fails quality gate before it can accumulate CTR |
| Non-local content competed equally with local | Local relevance is now a weighted pre-filter |
| Topical depth: no explicit signal | Topical depth: positive eligibility signal |
| YMYL: same rules as Search | YMYL: stricter author credential check for Discover eligibility |

Producing quality content was never enough to guarantee Discover visibility. But under the old system, producing engaging content was often sufficient even if quality was marginal. That’s no longer true.
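The difference between the two flows is easier to see as a toy model. The sketch below is not Google's implementation, which is not public. It is a simplified illustration of the ordering described above, with hypothetical field names, and it treats geographic relevance as a yes/no check even though it appears to act as a weighted signal in practice.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Candidate:
    url: str
    ctr: float          # click-through rate in the feed
    passes_eeat: bool   # hypothetical outcome of the E-E-A-T check
    geo_relevant: bool  # hypothetical outcome of the geographic check


def old_flow(candidates: List[Candidate]) -> List[str]:
    """Pre-update model: everything is eligible, CTR decides the order."""
    ranked = sorted(candidates, key=lambda c: c.ctr, reverse=True)
    return [c.url for c in ranked]


def new_flow(candidates: List[Candidate]) -> List[str]:
    """Post-update model: the quality gate runs first, then CTR decides."""
    eligible = [c for c in candidates if c.passes_eeat and c.geo_relevant]
    return old_flow(eligible)
```

In this model a high-CTR clickbait piece tops the old ranking but never appears in the new one, because it is filtered out before CTR is consulted.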

How to recover from the February 2026 update: a step-by-step action plan

Recovery comes from producing new eligible content, not from editing old articles. That’s the most important thing to understand about how Discover re-evaluates publishers.

Discover re-assesses your content on a rolling basis. Old articles that have already been declined eligibility don’t get re-evaluated unless they’re substantially updated. Your fastest path to recovery is new content that signals the right factors from day one.

Step 1: diagnose your Discover exposure in Search Console

- Open Search Console → Performance → Search type: Discover.

- Filter by date: compare Feb 1–4 (pre-update, since the rollout began on Feb 5) to Feb 10–27 (during rollout).

- Identify which specific pages lost impressions, not just overall traffic.

- Check whether those pages share characteristics: topic type, headline style, author attribution.

This diagnosis determines which root cause applies to your site. Don’t skip it.
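Because the two date windows are different lengths, raw impression totals are not directly comparable. The sketch below assumes you have already loaded per-page Discover impression totals for each window into dictionaries (how you export them is up to you). It converts them to daily averages and lists the pages that lost the most. All names here are hypothetical.

```python
from typing import Dict, List, Tuple


def daily_average(totals: Dict[str, int], days: int) -> Dict[str, float]:
    """Convert per-page impression totals into per-day averages."""
    return {page: total / days for page, total in totals.items()}


def biggest_losers(pre: Dict[str, int], pre_days: int,
                   post: Dict[str, int], post_days: int,
                   top_n: int = 20) -> List[Tuple[str, float]]:
    """Rank pages by percentage change in average daily impressions.

    Pages missing from the later window count as zero impressions.
    """
    pre_avg = daily_average(pre, pre_days)
    post_avg = daily_average(post, post_days)
    rows = []
    for page, before in pre_avg.items():
        if before == 0:
            continue  # no baseline to compare against
        after = post_avg.get(page, 0.0)
        rows.append((page, round((after - before) / before * 100.0, 1)))
    rows.sort(key=lambda row: row[1])  # most negative change first
    return rows[:top_n]
```

Once you have the list, look for what the worst-hit pages have in common: topic type, headline style, or missing author attribution.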

Step 2: audit headlines for clickbait patterns

Export your top 20 Discover pages from the pre-update period. Score each headline against these criteria:

- Withheld information: “You won’t believe…” / “The reason is…” → Flag

- Fabricated urgency: “Right now” / “Before it’s too late” with no actual time sensitivity → Flag

- Exaggerated superlatives: “The best ever” / “Shocking” / “Incredible” without evidence → Flag

- Passes: Specific, descriptive, truthful headlines that match article content → Keep

Rewrite flagged headlines. Specific beats vague. Descriptive beats emotional. The headline should tell the reader exactly what they’ll get, not make them curious enough to click.
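A first pass over the exported headlines can be automated. This is a rough sketch: the patterns only cover the example phrases listed above, the names are hypothetical, and a regular expression cannot judge whether urgency is genuine, so every flag still needs a human look.

```python
import re
from typing import Dict, List

# Patterns built from the example phrases in the criteria above.
PATTERNS: Dict[str, "re.Pattern[str]"] = {
    "withheld information": re.compile(
        r"you won[’']t believe|the reason (why )?is", re.IGNORECASE),
    "fabricated urgency": re.compile(
        r"right now|before it[’']s too late", re.IGNORECASE),
    "exaggerated superlative": re.compile(
        r"\b(best ever|shocking|incredible)\b", re.IGNORECASE),
}


def audit_headline(headline: str) -> List[str]:
    """Return the labels of every pattern the headline matches."""
    return [label for label, pattern in PATTERNS.items()
            if pattern.search(headline)]


def flagged_share(headlines: List[str]) -> float:
    """Fraction of headlines that match at least one pattern."""
    if not headlines:
        return 0.0
    flagged = sum(1 for h in headlines if audit_headline(h))
    return flagged / len(headlines)
```

Run `flagged_share` over your top 20 headlines to get a single number you can track as you rewrite. An empty result from `audit_headline` means no listed pattern matched, not that the headline is guaranteed to pass.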

Step 3: add E-E-A-T signals at content and site level

At the article level:

- Add a named author byline to every piece.

- Link the author name to a bio page listing relevant experience, credentials, or publications.

- Cite external sources for factual claims, not just for SEO, but for trust signals.

- Add a “Last reviewed” or “Updated” date where content ages.

At the site level:

- Ensure your About page explains the site’s purpose, editorial standards, and who runs it.

- If YMYL topics are covered, add explicit author credential information, not just a name.
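One way to make the byline, bio link, and update date machine-readable is schema.org Article markup. Whether Discover reads this markup for eligibility is an assumption, not something the tracking data confirms, so treat the sketch below as a complement to the visible on-page signals rather than a replacement. The function name and the sample values are hypothetical.

```python
import json


def article_jsonld(headline: str, author_name: str, author_url: str,
                   job_title: str, date_modified: str) -> str:
    """Build schema.org Article JSON-LD with a named Person as author."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "dateModified": date_modified,  # ISO 8601 date, e.g. "2026-03-01"
        "author": {
            "@type": "Person",
            "name": author_name,
            "url": author_url,      # link to the author's bio page
            "jobTitle": job_title,  # relevant credential or role
        },
    }
    return json.dumps(data, indent=2)
```

The returned string goes inside a `script` tag of type `application/ld+json` in the page head, and the values should match what readers see in the visible byline and bio.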

Step 4: build topical depth — publish vs. prune

Not every old article needs attention. Use this decision framework:

- High traffic / strong E-E-A-T: Leave it. It’s working.

- Low traffic / thin content / no author: Either update substantially (add 400+ words of original analysis, add author) or consolidate into a stronger piece.

- Clickbait headline / decent content: Update the headline only. The body content may still be eligible.

- Outdated / factually weak / no clear purpose: Prune — remove from index or redirect.

Your goal is a content library where every indexed article could plausibly pass a Discover quality gate, not just your newest ones.
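If you are triaging hundreds of articles, the framework above can be written down as a small function. The field names are hypothetical, and the order in which the rules are checked is an assumption, because the framework does not say which rule wins when an article fits more than one row. Anything that fits none of the four rows is sent to manual review.

```python
from dataclasses import dataclass


@dataclass
class Article:
    high_traffic: bool
    strong_eeat: bool
    thin_content: bool
    has_author: bool
    clickbait_headline: bool
    outdated: bool  # outdated, factually weak, or no clear purpose


def triage(a: Article) -> str:
    """Map an article to one of the actions in the decision framework."""
    if a.outdated:
        return "prune"
    if a.clickbait_headline and not a.thin_content:
        return "rewrite headline"
    if not a.high_traffic and (a.thin_content or not a.has_author):
        return "update or consolidate"
    if a.high_traffic and a.strong_eeat:
        return "leave"
    return "review manually"
```

Filling in the flags for each article is still a judgement call. The function only keeps the decisions consistent across a large library.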

Step 5: strengthen geographic relevance signals

If geographic mismatch contributed to your drop, the signals to fix are:

- Make your site’s primary audience explicit in your About page, your author bios, and your content focus.

- If you’re a non-US publisher targeting US audiences, consider whether a separate US-focused content cluster makes sense, or whether pivoting to your natural geographic audience delivers better long-term Discover performance.

- Local publishers: lean into it. Your competitive advantage just got larger.

How long does recovery take and what does it look like?

Recovery typically takes 2 to 8 weeks after you begin producing eligible content. The range is wide because it depends on how much new content you’re publishing and how cleanly it signals the updated ranking factors.

Three factors affect speed:

- Publishing volume: Sites publishing 3–5 new articles per week recovered faster than those publishing one.

- Signal clarity: Articles that strongly signalled all five eligibility factors (author credentials present, no clickbait, geographic relevance, topical depth, original insight) recovered faster than partially compliant content.

- Existing library quality: Sites that pruned weak content alongside publishing new content recovered faster, because a cleaner library improves overall site-level quality signals.

What partial recovery looks like in Search Console: Discover impressions begin recovering on newer articles before older ones. You’ll see a gap: new content performing, old content still flat. That’s normal and expected. Full recovery means the older content either returns to eligibility or you’ve replaced its traffic with stronger new pieces.

Future-proofing your Discover strategy in 2026 and beyond

The publishers who will perform best in Discover over the next 12 months are not those who optimise for Discover specifically. They’re the ones building content that is genuinely good: specific, authored, locally relevant where applicable, and embedded in a topic area where their site has real depth.

That’s not a new idea. But the February 2026 update made it a technical requirement rather than a best-practice suggestion.

Three structural changes worth making now:

- Specialise, don’t generalise. A 40-article cluster on one sub-topic outperforms 40 scattered articles on 40 topics for Discover purposes. Choose your 2–3 core subject areas and build depth there.

- Make every author a real person. The days of “Staff Writer” bylines being acceptable are over, at least for Discover eligibility. Build author identity into your editorial workflow.

- Treat Discover as a distribution channel with its own rules, not as a byproduct of good SEO. Monitor your Discover report monthly. Treat Discover impression data the way you treat keyword rank data.

For deeper strategy resources, visit Khalid SEO for our full guides on E-E-A-T auditing, local content strategy for Discover, and topical authority building, all updated post-February 2026.

FAQ

What was the February 2026 Google Discover Core Update?

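The February 2026 Discover Core Update was the first standalone Google update to target only the Discover feed, not organic search. It rolled out between February 5 and February 27, 2026, for US English users.

Prior to this update, Discover operated primarily on engagement signals: click-through rate in the feed was the primary distribution driver. The update inserted a quality evaluation stage before impression delivery, fundamentally changing which content types can reach the feed at all. Publishers who relied on clickbait patterns to generate feed impressions were disproportionately affected.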

Why did my Google Discover traffic suddenly drop in February 2026?

Your Discover traffic dropped because the update changed eligibility criteria, likely due to one or more of: clickbait headlines, weak E-E-A-T signals, YMYL content without verifiable credentials, geographic mismatch, or shallow topical coverage.

The most reliable way to diagnose which cause applies to your site is to use Search Console’s Discover report to identify which specific pages lost impressions, then audit those pages against the five root causes outlined in this post. A blanket “improve content quality” approach without diagnosing the specific cause usually produces slow or no results.

Does a Google Discover traffic drop mean my search rankings also dropped?

No. A Discover traffic drop does not indicate any change to your organic search rankings. Google Search and Google Discover now operate as separate systems with distinct eligibility and ranking logic.

You can verify this by comparing the Search and Discover views in Search Console’s Performance report. If your Search impressions and clicks are stable while Discover impressions fell sharply around February 5, 2026, only your Discover eligibility was affected. Avoid making large-scale changes to pages that rank well organically in response to a Discover-specific issue.

How long does it take to recover from the February 2026 Discover update?

Recovery typically takes 2 to 8 weeks from when you begin consistently publishing eligible content — meaning content with strong E-E-A-T signals, non-clickbait headlines, and topical depth.

Recovery does not come from editing old articles; it comes from new content that signals the updated factors from publication. Sites publishing 3–5 compliant articles per week recovered faster than those publishing one per week. Pairing new content with a light pruning of the weakest old content accelerates the timeline further.

Which types of websites were most affected by the February 2026 Discover update?

The hardest-hit sites were large clickbait publishers (−47% to −70%), YMYL sites without verifiable E-E-A-T (−35% to −55%), non-US publishers targeting US audiences (−30% to −45%), and general news aggregators (−25% to −40%).

The clearest winners were local and regional publishers, sites with a defined geographic audience and topical identity. Deep-niche specialist blogs with strong author credentials also gained visibility. The update essentially rewarded publishers who had been doing the right things all along and penalised those who had been gaming engagement signals through sensational content.

Published by Khalid SEO: strategic SEO insights for publishers, content marketers, and digital growth professionals. All data synthesised from DiscoverSnoop and NewzDash DiscoverPulse, February–March 2026.

Hi, I am Khalid. I am an SEO and AI Search Specialist.
