Your content ranks on page one of Google. But when someone asks ChatGPT, Gemini, or Perplexity the same question, your site doesn’t appear. Not even close.

That gap is growing. Fast.

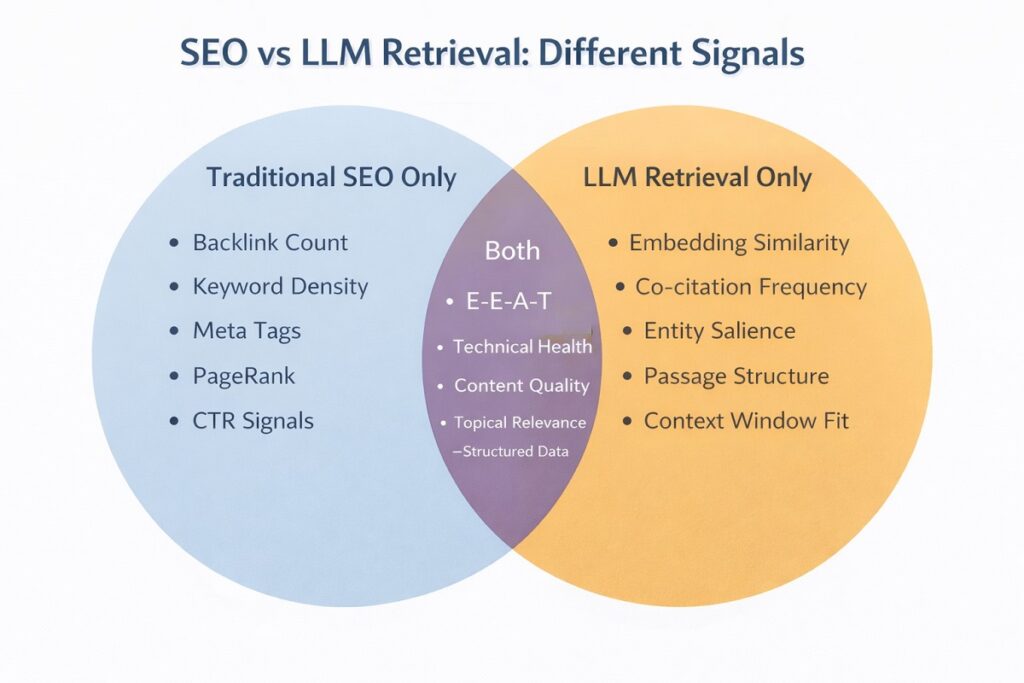

Traditional SEO and LLM retrieval use fundamentally different logic. One ranks pages. The other selects passages. One rewards backlinks. The other rewards entity authority and semantic depth. If you’re still optimizing exclusively for Google’s algorithm, you’re invisible to a rapidly expanding share of how people find information.

This guide fixes that. You’ll get the exact ranking factors that determine LLM retrieval, a clear map of where each factor applies in the retrieval pipeline, and a practical audit framework you can use on your existing content today.

The strategies covered here are part of the LLMO (Large Language Model Optimization) framework developed and applied at Khalid SEO, built specifically for SEOs who already know traditional ranking but need to bridge into AI-era visibility.

What Is LLM Retrieval and Why Does It Demand a Different Strategy?

Google ranks documents. LLMs retrieve answers.

That one distinction changes almost everything about how you should optimize content.

When a user types a query into ChatGPT or Perplexity, the system doesn’t crawl the web in real time and rank pages by domain authority. Instead, it matches the query against a vast vector space of embedded content, selecting the passages most semantically similar to the query’s intent.

Understanding that pipeline is the first step to optimizing for it.

How Traditional Search Engines Rank Content

The traditional model is familiar: Googlebot crawls your page, the indexer stores it, and the ranking algorithm scores it against hundreds of signals – backlinks, keyword relevance, page experience, E-E-A-T.

The unit of ranking is the URL.

Your job in traditional SEO is to make a page rank for a keyword. Success is measured by position in the SERP.

How LLMs Retrieve Content Differently

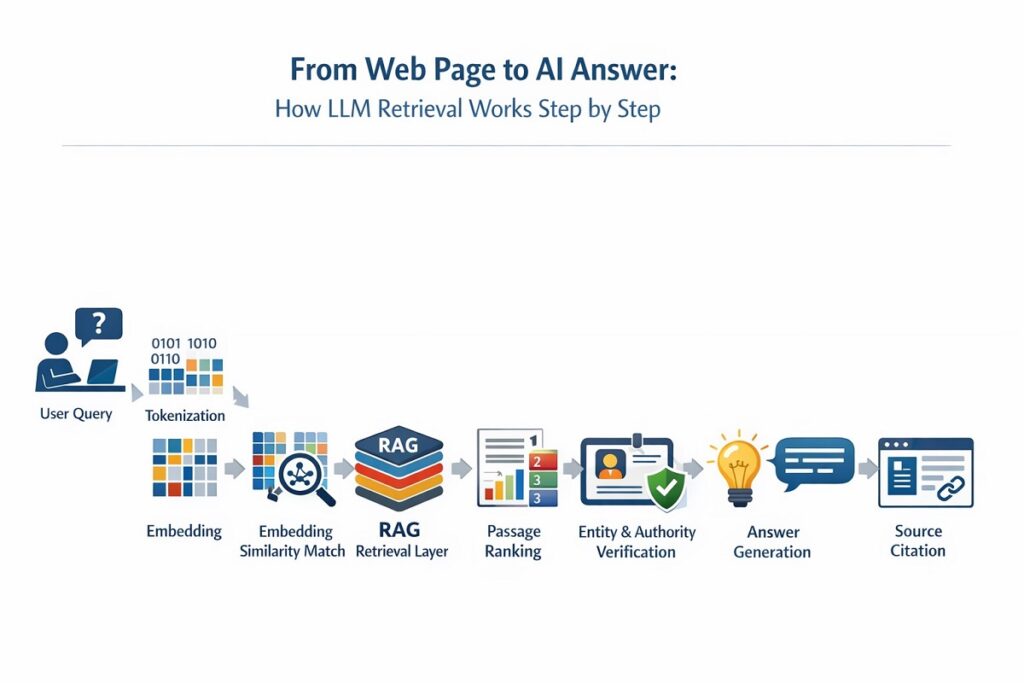

LLMs operate on a different layer entirely. The process, broadly, works like this:

- The user’s query is tokenized and converted into a mathematical vector

- That vector is matched against an index of pre-embedded content chunks

- The most semantically similar passages are retrieved

- The LLM synthesizes those passages into a final answer, with or without citing the source

The unit of retrieval is the passage, not the page.
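
The four steps above can be sketched in a few lines of Python. This is a minimal illustration, not how any production system is built: the `embed` function is a toy bag-of-words stand-in for a learned embedding model, and the function names and sample passages are assumptions made up for this example.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy 'embedding': a bag-of-words count vector (stand-in for a real model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine of the angle between two sparse count vectors."""
    dot = sum(a[term] * b[term] for term in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query: str, passages: list[str], top_k: int = 2) -> list[tuple[float, str]]:
    """Score every pre-chunked passage against the query and keep the top_k."""
    query_vec = embed(query)
    scored = [(cosine_similarity(query_vec, embed(p)), p) for p in passages]
    return sorted(scored, key=lambda pair: pair[0], reverse=True)[:top_k]


# Hypothetical passages, each one a separate retrievable chunk.
passages = [
    "Retrieval-Augmented Generation (RAG) lets a language model pull indexed passages into its answer.",
    "Our agency was founded in 2015 and has offices in three cities.",
    "Backlinks remain a ranking signal for traditional search engines.",
]

results = retrieve("what is retrieval augmented generation", passages, top_k=1)
print(results[0][1])
```

Note that the score is computed per passage. Nothing in this sketch knows which page a passage came from, which is the point of the passage-versus-page distinction.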

This is where RAG (Retrieval-Augmented Generation) architecture becomes critical. RAG allows LLMs to pull real-time or indexed external content into their responses. If your content is structured for passage-level retrieval, it can be selected. If it isn’t, it won’t be, regardless of your domain authority.

Key concepts driving LLM retrieval:

- Embedding similarity — how closely your content’s vector matches the query vector

- Semantic chunking — how your content is broken into retrievable segments

- Token relevance — how precisely your language aligns with the query’s meaning

- Dense retrieval — the mechanism for finding semantically relevant content beyond exact keyword match

Why Your Top-Ranking Google Page May Be Invisible to AI

Here’s the uncomfortable truth: a page can rank #1 on Google and score zero retrievals from LLMs.

Why? Because Google rewards the page for authority signals. LLMs reward specific passages for semantic precision and factual grounding.

A 3,000-word page stuffed with keywords but lacking clear entity definitions, structured formatting, or direct-answer passages is effectively opaque to most retrieval systems.

Ranking ≠ Retrieval. That distinction is the core problem this guide solves.

The 7 Core Ranking Factors for LLM Retrieval

The key ranking factors for LLM retrieval are:

1) Topical Authority,

2) Entity Salience,

3) Semantic Relevance,

4) Structured Data & Schema Markup,

5) E-E-A-T Signals,

6) Co-Citation & Brand Mentions, and

7) Content Freshness & Factual Grounding.

Each factor influences a different stage of the RAG retrieval pipeline. Below is a breakdown of each – what it means, why it matters, and what to do about it.

Factor 1 — Topical Authority & Semantic Depth

LLMs are trained on vast corpora of text. They develop an implicit sense of which sources thoroughly cover a topic versus which sources merely mention it.

Topical authority is your content’s ability to signal comprehensive, interconnected coverage of a subject: not just a single page, but an entire semantic cluster.

What this looks like in practice:

- A hub-and-spoke content architecture where a pillar page links to supporting articles covering every relevant subtopic

- Consistent use of co-occurrence terms — the words and concepts statistically expected to appear alongside your primary topic

- Internal linking that reinforces the topical map across your domain

A site like Khalid SEO that publishes interconnected content on LLMO strategy, entity optimization, and semantic SEO collectively signals deeper authority than a site with a single isolated post on the same subject.

Factor 2 — Entity Salience & Knowledge Graph Alignment

Entity salience refers to how prominently and clearly your content defines its core subject.

LLMs parse content looking for named entities (people, organizations, concepts, products) and use those entities to categorize and retrieve the content accurately. If your page is about RAG architecture but never explicitly names or defines that entity, the LLM has weaker confidence in what your content covers.

Practical actions:

- Define your primary entity within the first 100 words of any content

- Use the entity’s full name and common variations (e.g., “Retrieval-Augmented Generation (RAG)”)

- Reference related entities from the Knowledge Graph — organizations, tools, and concepts that co-exist with your primary subject
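
The first two actions can be checked mechanically. The sketch below assumes you have the page body as plain text. The function name, the 100-word window default, and the sample strings are illustrative choices, not part of any tool.

```python
import re


def entity_in_opening(text: str, entity_variants: list[str], window: int = 100) -> bool:
    """Return True if any variant of the entity name appears in the first `window` words."""
    opening = " ".join(text.split()[:window]).lower()
    for variant in entity_variants:
        # Lookarounds instead of \b so variants ending in punctuation still match.
        pattern = r"(?<!\w)" + re.escape(variant.lower()) + r"(?!\w)"
        if re.search(pattern, opening):
            return True
    return False


good_intro = "Retrieval-Augmented Generation (RAG) is an architecture that feeds retrieved passages to a model."
late_intro = " ".join(["filler"] * 150) + " RAG is finally introduced here."

print(entity_in_opening(good_intro, ["Retrieval-Augmented Generation", "RAG"]))
print(entity_in_opening(late_intro, ["Retrieval-Augmented Generation", "RAG"]))
```

Passing both the full name and the abbreviation as variants mirrors the advice above to use the entity’s full name and its common variations.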

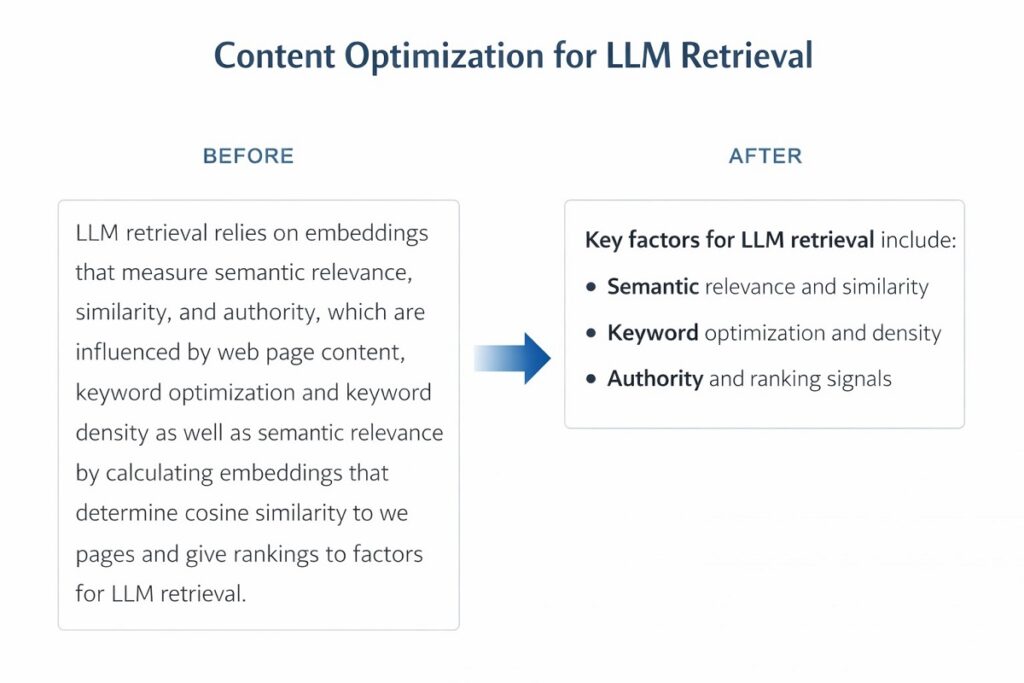

Factor 3 — Semantic Relevance & Embedding Proximity

This is where traditional keyword thinking breaks down.

LLMs don’t match keywords. They match meaning. Your content is embedded as a high-dimensional vector, and retrieval selects the passages whose vectors are closest to the query’s vector.

To optimize for embedding proximity:

- Write content that naturally incorporates the semantic neighborhood of your topic: the co-occurrence terms identified in your keyword strategy

- Avoid excessive repetition of exact phrases; focus instead on contextual completeness

- Use varied, precise vocabulary that covers the topic’s conceptual edges, not just its center

Think of it this way: a document about LLM retrieval that also naturally discusses embeddings, RAG, passage ranking, and token relevance will occupy a richer position in vector space than one that repeats “LLM retrieval” fifty times.

Factor 4 — Structured Data & Schema Markup

Schema markup is how you explicitly tell an LLM what your content is, who created it, and what questions it answers.

Without structured data, a retrieval system must infer your content’s meaning. With it, you remove ambiguity entirely.

Highest-impact schema types for LLM retrieval:

| Schema Type | Primary Benefit for LLM Retrieval |
|---|---|
| Article | Identifies content type, author, and date; signals credibility |
| FAQPage | Directly maps questions to answers for passage extraction |
| HowTo | Structures step-by-step processes for ordered retrieval |
| Organization | Establishes brand entity in the Knowledge Graph |
| BreadcrumbList | Reinforces topical hierarchy and site architecture signals |
| Person (Author) | Builds author entity; critical for E-E-A-T verification |

Implementing FAQPage schema alone can significantly increase the probability that your explicit Q&A blocks are extracted and cited verbatim in AI answers.
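
As a rough illustration of what FAQPage markup looks like, the sketch below builds the JSON-LD from a list of question and answer pairs. The `@type`, `mainEntity`, `name`, and `acceptedAnswer` keys follow the schema.org vocabulary. The helper name `faq_page_jsonld` is an assumption made up for this example.

```python
import json


def faq_page_jsonld(qa_pairs: list[tuple[str, str]]) -> str:
    """Serialize (question, answer) pairs as schema.org FAQPage JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


faq_markup = faq_page_jsonld(
    [
        (
            "How is LLM retrieval different from traditional Google search ranking?",
            "Traditional SEO ranks full pages by backlinks and keywords. "
            "LLM retrieval selects specific passages using embedding similarity.",
        )
    ]
)
print(faq_markup)
```

The resulting string is typically placed in a `<script type="application/ld+json">` element on the page that visibly contains the same questions and answers.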

Factor 5 — E-E-A-T Signals & Author Authority

E-E-A-T is no longer just a Google quality framework. It is increasingly how LLMs evaluate source credibility when selecting which content to cite.

Experience. Expertise. Authoritativeness. Trustworthiness.

LLMs are trained on web data that includes signals of credibility – author bylines, institutional affiliations, citation patterns, and external references to a source. Content that demonstrably comes from a credible entity is more likely to be retrieved and cited.

What to implement:

- Author Schema with consistent name, credentials, and organization markup

- A clear, detailed author bio page with cross-references to published work

- Byline consistency across your domain and external publications

- Factual claims supported by links to primary, authoritative sources
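
A minimal sketch of the author markup described in the first item, generated from Python for consistency with the other examples in this guide. The property names (`author`, `jobTitle`, `worksFor`, `sameAs`, `datePublished`, `dateModified`) are schema.org properties. The author, organization, URLs, and dates are placeholders, not real data.

```python
import json


def article_jsonld(headline: str, author: dict, published: str, modified: str) -> str:
    """Serialize an Article with a nested Person author as schema.org JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": published,
        "dateModified": modified,
        "author": {
            "@type": "Person",
            "name": author["name"],
            "jobTitle": author["job_title"],
            "url": author["bio_url"],
            "sameAs": author["profiles"],
            "worksFor": {"@type": "Organization", "name": author["organization"]},
        },
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


# Placeholder author record: every value here is hypothetical.
example_author = {
    "name": "Jane Doe",
    "job_title": "Head of Search",
    "organization": "Example Agency",
    "bio_url": "https://example.com/authors/jane-doe",
    "profiles": ["https://www.linkedin.com/in/jane-doe-example"],
}

article_markup = article_jsonld(
    "The 7 Core Ranking Factors for LLM Retrieval",
    example_author,
    published="2025-01-15",  # hypothetical dates
    modified="2025-06-01",
)
print(article_markup)
```

Keeping the author record in one place and reusing it across every article is one way to get the byline consistency the list above asks for.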

Factor 6 — Co-Citation & Brand Mention Signals

Links matter less here than you might expect.

LLMs are trained on vast amounts of text where sources are discussed, referenced, and mentioned, not just hyperlinked. When authoritative sources in your niche regularly mention your brand or cite your work, LLMs absorb that as a trust signal during training and retrieval.

Co-citation occurs when two sources are mentioned together in the context of the same topic. If a leading marketing publication mentions both Ahrefs and your site in the context of keyword research, that co-citation elevates your entity’s perceived relevance.

Practical strategies:

- Pursue digital PR — get your content cited in high-authority editorial pieces

- Create original data and research that others naturally reference

- Monitor brand mentions using tools like Brand24 or Google Alerts and track citation growth as an LLMO KPI

Factor 7 — Content Freshness & Factual Grounding

LLMs have training cutoffs, but many are supplemented with real-time retrieval via RAG. In either case, content that is factually accurate, internally consistent, and regularly updated performs better in retrieval scoring.

Content decay is real. A once-authoritative post that now contains outdated statistics, deprecated tools, or superseded advice loses retrieval relevance over time.

Freshness actions:

- Add a visible last-updated timestamp to all content

- Conduct a quarterly content audit — flag posts with statistics older than 18 months

- Update internal links as your content cluster grows to maintain topical signal strength
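
The 18-month rule from the audit step is easy to automate once you record the date of the newest statistic in each post. The sketch below assumes you maintain that mapping yourself. The URLs, dates, and function names are hypothetical.

```python
from datetime import date


def months_between(earlier: date, later: date) -> int:
    """Whole calendar months elapsed from `earlier` to `later`."""
    months = (later.year - earlier.year) * 12 + (later.month - earlier.month)
    if later.day < earlier.day:
        months -= 1  # the final month is not complete yet
    return months


def flag_stale(posts: dict[str, date], today: date, max_age_months: int = 18) -> list[str]:
    """Return the URLs whose newest statistic is older than `max_age_months`."""
    return sorted(
        url
        for url, newest_stat in posts.items()
        if months_between(newest_stat, today) > max_age_months
    )


# Hypothetical inventory: URL -> date of the most recent statistic cited in the post.
inventory = {
    "/blog/llm-retrieval-basics": date(2023, 1, 15),
    "/blog/schema-for-ai-search": date(2024, 9, 1),
}

stale = flag_stale(inventory, today=date(2025, 1, 10))
print(stale)
```

Passing `today` explicitly, rather than calling `date.today()` inside the function, keeps each quarterly audit reproducible.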

How the RAG Pipeline Works and Where Each Factor Applies

Understanding where each ranking factor intervenes in the retrieval process allows you to prioritize your optimization efforts with precision rather than guesswork.

Stage 1 — Query Tokenization & Intent Parsing

When a user submits a query, the LLM tokenizes it, breaking it into units of meaning, and parses the underlying intent.

Relevant factors: Semantic Relevance, Entity Salience

If your content uses the same conceptual vocabulary as common user queries, your embedding will score higher in the initial match. This is why entity clarity in your opening paragraphs is disproportionately impactful.

Stage 2 — Embedding & Vector Matching

Your content has already been embedded as a vector. The retrieval system computes the similarity between the query vector and your content’s vector.

Relevant factors: Topical Authority, Semantic Depth, Co-occurrence Term Coverage

The richer your semantic coverage (the more co-occurrence terms, related entities, and contextual vocabulary you naturally include), the stronger your vector representation. Shallow content produces weak, generic embeddings that score poorly against specific queries.

Stage 3 — Passage Retrieval & Ranking

The system doesn’t retrieve your whole page. It retrieves specific passages, typically 100–300 word chunks, that best answer the query.

Relevant factors: Structured Data, Content Freshness, Direct-Answer Formatting

Passages that open with a clear direct answer, use structured formatting, and contain fresh factual claims score higher than long, meandering paragraphs that bury the answer. This is where content formatting becomes a direct retrieval signal.
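
To see how your own page might split into passages, you can approximate the chunking step. The sketch below packs paragraphs into chunks of at most 300 words, the upper end of the range mentioned above. Real retrieval systems use their own chunking rules, so treat this as a rough preview under that assumption. The function name is made up for this example.

```python
def chunk_passages(text: str, max_words: int = 300) -> list[str]:
    """Greedily pack paragraphs into chunks of at most `max_words` words."""
    chunks: list[str] = []
    current: list[str] = []  # words collected for the chunk being built

    for paragraph in text.split("\n\n"):
        words = paragraph.split()
        if not words:
            continue
        # Close the current chunk if this paragraph would push it over the limit.
        if current and len(current) + len(words) > max_words:
            chunks.append(" ".join(current))
            current = []
        # A paragraph longer than the limit is hard-split on word boundaries.
        while len(words) > max_words:
            chunks.append(" ".join(words[:max_words]))
            words = words[max_words:]
        current.extend(words)

    if current:
        chunks.append(" ".join(current))
    return chunks


# Three synthetic paragraphs of 120, 150, and 200 words.
sample_text = "\n\n".join(" ".join(["word"] * n) for n in (120, 150, 200))
chunk_sizes = [len(chunk.split()) for chunk in chunk_passages(sample_text)]
print(chunk_sizes)
```

A long paragraph that gets hard-split mid-thought is exactly the kind of passage that buries its answer, which is one practical argument for short, self-contained paragraphs.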

Stage 4 — Authority Verification & Citation Selection

Before generating the final answer, the LLM applies a credibility filter. Which of the retrieved passages comes from a source worth citing?

Relevant factors: E-E-A-T, Co-Citation Signals, Brand Authority

This is where your off-page authority work pays off. A passage from a source with strong entity presence, cross-web mentions, and established author credentials is selected over an equally relevant passage from an anonymous or low-authority source.

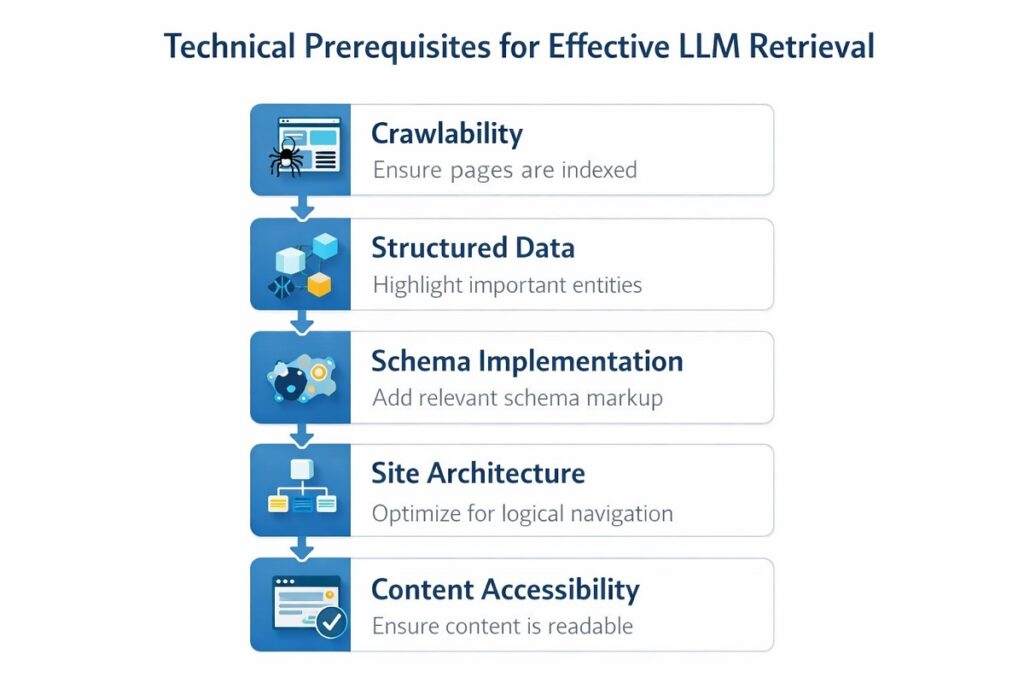

Technical SEO Foundations for LLM Retrieval Visibility

Before content quality or authority signals can matter, AI engines must be able to access and parse your content. Technical failures at this layer block everything downstream.

Ensuring AI Bot Crawl Accessibility

Several major LLM providers use their own crawlers, or publish robots.txt user-agent tokens, that determine whether your content is available to their systems:

- GPTBot (OpenAI)

- Google-Extended (Google Gemini)

- PerplexityBot (Perplexity AI)

- ClaudeBot (Anthropic)

Some SEO practitioners block these bots to conserve crawl budget or out of data-sharing concerns. That’s a legitimate choice, but it comes at a direct cost to LLM retrieval visibility.

Check your robots.txt now. If these bots are disallowed and you want AI visibility, update your crawl permissions.
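
One way to run that check is with Python’s standard-library `urllib.robotparser`. The sketch below parses a robots.txt string and reports, for each user-agent token listed above, whether a given URL may be fetched. The sample robots.txt and the `ai_bot_access` helper are illustrative assumptions. In practice you would paste in your own file.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens taken from the list above.
AI_USER_AGENTS = ["GPTBot", "Google-Extended", "PerplexityBot", "ClaudeBot"]


def ai_bot_access(robots_txt: str, url: str = "https://example.com/") -> dict[str, bool]:
    """Map each AI user-agent token to whether robots.txt lets it fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in AI_USER_AGENTS}


# Hypothetical robots.txt that blocks GPTBot and allows everything else.
sample_robots = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

access = ai_bot_access(sample_robots)
for agent, allowed in access.items():
    print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```

This only tells you what your robots.txt declares. Whether a given bot honors it, and how each provider uses the content it fetches, is outside what this check can show.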

Schema Markup Priorities for LLM Parsing

Refer to the schema priority table in the Factor 4 section above. Implementation priority order:

1. Article + Person (author): foundational credibility signals

2. FAQPage: highest direct impact on passage extraction

3. Organization: entity establishment in the Knowledge Graph

4. HowTo: for instructional content

5. BreadcrumbList: topical hierarchy reinforcement

Site Architecture & Internal Linking for Topical Signals

A flat, disconnected site structure weakens topical authority signals for LLMs. Implement a hub-and-spoke model:

- One pillar page per core topic cluster

- Supporting articles each linking back to the pillar

- The pillar linking out to each supporting article

- Anchor text that uses semantic variations of the target entity, not the same exact phrase every time

This structure doesn’t just help Google. It creates a web of semantic signals that reinforces your topical authority across the entire embedding space.
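
If you can export your internal links (for example from a crawl), the two linking rules above reduce to a simple graph check. The sketch below assumes a mapping from each URL to the set of URLs it links to. The paths and the function name are hypothetical.

```python
def hub_and_spoke_gaps(
    pillar: str, spokes: list[str], links: dict[str, set[str]]
) -> dict[str, list[str]]:
    """List spokes the pillar fails to link to, and spokes that fail to link back."""
    pillar_links = links.get(pillar, set())
    return {
        "pillar_missing_links_to": [s for s in spokes if s not in pillar_links],
        "spokes_missing_link_back": [s for s in spokes if pillar not in links.get(s, set())],
    }


# Hypothetical cluster: page URL -> internal URLs it links to.
site_links = {
    "/llmo": {"/llmo/entities", "/llmo/schema"},
    "/llmo/entities": {"/llmo"},
    "/llmo/schema": set(),
    "/llmo/co-citation": {"/llmo"},
}

gaps = hub_and_spoke_gaps(
    "/llmo",
    ["/llmo/entities", "/llmo/schema", "/llmo/co-citation"],
    site_links,
)
print(gaps)
```

An empty list under both keys means the cluster satisfies the pillar-to-spoke and spoke-to-pillar rules. Anchor text variety still needs a separate review.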

Content Formatting Strategies That Improve Passage Retrieval

Even perfectly researched content fails LLM retrieval if it’s formatted for human reading rather than machine parsing.

LLMs retrieve passages. Your job is to make your best passages findable, parseable, and self-contained.

Writing Direct-Answer Passages — The 40-Word Rule

Every major question your content addresses should have a 40–60 word direct-answer block placed immediately after the relevant heading.

This mirrors featured snippet optimization but operates at the passage level. The answer block should:

- Stand alone without requiring context from surrounding paragraphs

- Name the entity being addressed explicitly

- Use declarative, factual language — not hedged or conversational

Think of each H3 as a mini-answer card. The LLM should be able to lift it cleanly and drop it into a generated response without modification.
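
The length part of this rule is straightforward to audit. The sketch below assumes you have extracted the first paragraph under each heading into a dictionary. It reports every block whose word count falls outside the 40–60 range. The function name and sample data are illustrative.

```python
def audit_answer_blocks(
    sections: dict[str, str], min_words: int = 40, max_words: int = 60
) -> dict[str, int]:
    """Return heading -> word count for answer blocks outside the target range."""
    out_of_range: dict[str, int] = {}
    for heading, answer in sections.items():
        count = len(answer.split())
        if not min_words <= count <= max_words:
            out_of_range[heading] = count
    return out_of_range


# Synthetic answer blocks of 45, 12, and 90 words.
sample_sections = {
    "What is RAG?": " ".join(["word"] * 45),
    "What is entity salience?": " ".join(["word"] * 12),
    "How does chunking work?": " ".join(["word"] * 90),
}

flagged = audit_answer_blocks(sample_sections)
print(flagged)
```

Word count is only a proxy. Whether the block stands alone and names its entity explicitly still needs a human read.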

Header Hierarchy as a Semantic Map

Your H1 → H2 → H3 structure is not just visual organization. It is a semantic taxonomy that LLMs use to understand the relationship between concepts in your content.

A logical header hierarchy signals:

- What the page is primarily about (H1 entity)

- What sub-topics it covers (H2 clusters)

- What specific questions it answers (H3 passages)

Illogical or inconsistent header structures confuse both crawlers and embedding models. Keep it clean and hierarchically accurate.
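
A quick way to spot an inconsistent structure is to extract the heading levels from a page’s HTML and look for skipped levels. The sketch below uses a simple regular expression rather than a full HTML parser, and it also treats anything other than exactly one H1 as a problem. Both are simplifying assumptions for illustration, and the function names are made up.

```python
import re


def heading_levels(html: str) -> list[int]:
    """Return the heading levels (1 to 6) in document order."""
    return [int(level) for level in re.findall(r"<h([1-6])\b", html, flags=re.IGNORECASE)]


def hierarchy_problems(levels: list[int]) -> list[str]:
    """Describe H1 count issues and places where a heading skips a level."""
    problems: list[str] = []
    if levels.count(1) != 1:
        problems.append(f"expected exactly one H1, found {levels.count(1)}")
    for previous, current in zip(levels, levels[1:]):
        if current > previous + 1:
            problems.append(f"H{previous} is followed by H{current} (skipped a level)")
    return problems


sample_html = "<h1>LLM Retrieval</h1><h2>Factors</h2><h4>Entity Salience</h4><h2>Audit</h2>"
issues = hierarchy_problems(heading_levels(sample_html))
print(issues)
```

Moving back up the hierarchy (an H4 followed by an H2) is not flagged, since closing a subsection is normal. Only jumps that skip a level on the way down are reported.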

Lists, Tables, and Structured Formats LLMs Prefer

Structured formatting isn’t just for readability. It directly improves semantic chunking, the process by which your content is broken into retrievable pieces.

LLM-preferred formats:

- Ordered lists for processes, steps, and ranked factors

- Tables for comparisons and attribute-value relationships

- Definition blocks for entity introductions

- Bold lead sentences that state the core claim before elaborating

Avoid long, unbroken paragraphs. They create semantic ambiguity: the retrieval system struggles to identify the precise claim worth extracting.

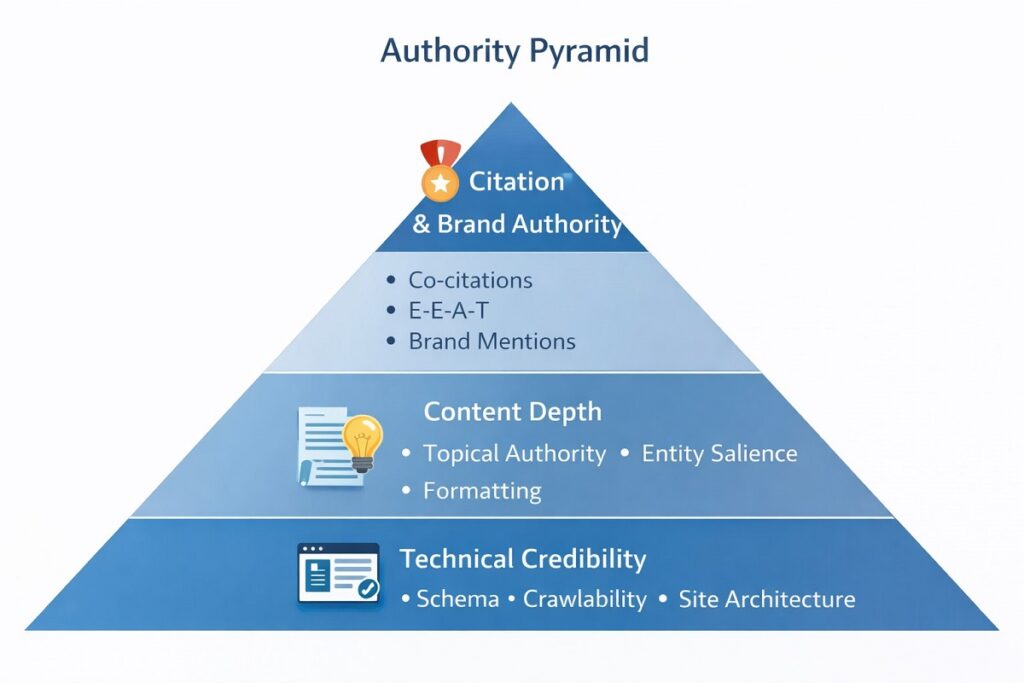

Building the Authority Signals LLMs Trust Most

Retrieval selects relevant passages. Citation selection favors authoritative sources. These are two different filters, and you need to pass both.

Author Entity Optimization

Your author is an entity. LLMs can verify that entity against the Knowledge Graph, cross-referencing your author’s presence across publications, social platforms, and structured data.

Build your author entity footprint:

- Implement Person schema on your author bio page with consistent name, job title, and organization

- Maintain an active, complete LinkedIn profile — it is heavily indexed and frequently referenced in LLM training data

- Publish under a consistent byline across all external guest posts and media appearances

- Cross-link your author bio to your most authoritative published work

Earning Co-Citations from High-Authority Sources

Co-citations are the currency of LLM authority. The goal is to be mentioned alongside the established authorities in your space.

Tactics that work:

- Original research and data studies — journalists and bloggers naturally cite original data

- Expert quotes and commentary — position your principals as go-to voices for industry publications

- Collaborative content — round-up posts, expert panels, and co-authored pieces that place your brand in credible company

- PR-driven outreach — target editorial placements in publications that are heavily represented in LLM training data (major industry publications, academic-adjacent sites, established news outlets)

Brand Mention Monitoring & Amplification

Not every mention includes a link. For LLM retrieval purposes, unlinked mentions still carry signal weight.

Track and amplify mentions:

- Set up Google Alerts for your brand name, key contributors, and primary content titles

- Use tools like Brand24 or Mention to monitor citation volume and sentiment

- When you find unlinked mentions, reach out for a link, but recognize the mention itself already contributes to your entity authority

At Khalid SEO, brand mention growth is tracked as a standalone LLMO KPI, separate from traditional link acquisition metrics.

The LLMO Readiness Audit — Score Your Content Right Now

Knowing the factors is one thing. Knowing which ones your specific content is failing on is another.

How to Use the Scorecard

The LLMO Readiness Scorecard is a 15-item self-assessment. Each item scores 0–2 points, for a maximum of 30. Your total determines your optimization priority level.

Score Interpretation:

- 🔴 0–10: Not AI-Visible — immediate structural changes required

- 🟡 11–20: Partially Optimized — key gaps exist; targeted fixes will yield fast gains

- 🟢 21–30: AI-Ready — maintain and refine; focus on authority amplification
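
The scoring arithmetic can be sketched as follows: 15 items, each scored 0 to 2, summed and mapped to the three bands above. The individual scorecard items are not reproduced here, so the function takes only the list of scores. Its name is an assumption for this example.

```python
def readiness_tier(item_scores: list[int]) -> tuple[int, str]:
    """Sum 15 item scores (each 0, 1, or 2) and map the total to a readiness band."""
    if len(item_scores) != 15:
        raise ValueError("the scorecard has exactly 15 items")
    if any(score not in (0, 1, 2) for score in item_scores):
        raise ValueError("each item must score 0, 1, or 2")

    total = sum(item_scores)
    if total <= 10:
        tier = "Not AI-Visible"
    elif total <= 20:
        tier = "Partially Optimized"
    else:
        tier = "AI-Ready"
    return total, tier


# Hypothetical audit result: eight items at 2, four at 1, three at 0.
example_scores = [2] * 8 + [1] * 4 + [0] * 3
print(readiness_tier(example_scores))
```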

The 3 Most Common LLMO Failure Points

Based on content audits conducted through Khalid SEO, three failure points appear consistently:

1. Missing entity definition in the opening. Fix: Add a clear, explicit definition of your primary entity within the first 100 words. Don’t assume the reader (or the LLM) knows what you’re talking about.

2. No structured data implementation. Fix: At minimum, implement Article, Person, and FAQPage schema. Use Google’s Rich Results Test to validate.

3. Shallow topical coverage (one page, no cluster). Fix: Build out supporting content that addresses every major subtopic. A single post, however well-written, cannot signal topical authority alone.

Traditional SEO vs. LLMO — Where to Focus Your Strategy

You don’t have to choose between them. But you do need to understand where they diverge, so you can allocate effort intelligently.

Signals That Overlap — Do Both Well

The foundation is shared. Content quality, technical accessibility, E-E-A-T, and topical relevance matter for both Google rankings and LLM retrieval. Time spent here has a double return.

Do not deprioritize:

- Core technical SEO (crawlability, site speed, mobile experience)

- E-E-A-T development (author authority, factual accuracy)

- Topical depth and content cluster architecture

Where Strategies Diverge

| Dimension | Traditional SEO Priority | LLMO Priority |
|---|---|---|
| Off-page signals | Backlink count & authority | Co-citation frequency & brand mentions |
| On-page signals | Keyword placement & density | Entity salience & semantic coverage |
| Content structure | Page-level optimization | Passage-level direct-answer blocks |
| Technical signals | Core Web Vitals, crawl budget | Schema markup, AI bot accessibility |
| Success metric | SERP position | AI citation frequency |

The Recommended Resource Allocation Framework

Where you should focus depends on your current maturity level:

Early Stage (Traditional SEO foundation not yet solid): → 80% traditional SEO / 20% LLMO foundations (schema + entity clarity)

Mid Stage (Ranking well on Google, zero AI visibility): → 50% traditional SEO / 50% LLMO (content restructuring, authority building, cluster development)

Advanced Stage (Strong Google presence, building AI-first visibility): → 30% traditional SEO maintenance / 70% LLMO (co-citation campaigns, passage optimization, scorecard audits)

Conclusion

LLM retrieval is not a future consideration. It is an active channel that is already influencing how a significant portion of your target audience discovers information.

The seven factors (topical authority, entity salience, semantic relevance, structured data, E-E-A-T, co-citation signals, and content freshness) are your optimization framework. Each one maps to a specific stage in the retrieval pipeline. Each one is actionable today.

Start with the LLMO Readiness Scorecard. Identify your weakest factor. Fix that first.

For a deeper breakdown of each cluster, including technical LLMO auditing, entity optimization, and co-citation strategy, explore the full LLMO resource library at Khalid SEO. Every guide in that library is built on the same strategic framework applied here.

Optimization doesn’t stop at the SERP anymore.

FAQ

What are the most important ranking factors for LLM retrieval?

The most critical factors are topical authority, entity salience, semantic relevance, structured data, E-E-A-T signals, co-citation mentions, and content freshness — each influencing a different stage of the RAG retrieval pipeline.

These factors differ fundamentally from traditional Google ranking signals. LLMs don’t evaluate pages by backlink count or keyword frequency. They evaluate passages by semantic precision, factual grounding, and source credibility. The most impactful single change most sites can make is restructuring content into clear, direct-answer passages with explicit entity definitions and FAQPage schema markup.

How is LLM retrieval different from traditional Google search ranking?

Traditional SEO ranks full pages by backlinks and keywords. LLM retrieval selects specific passages using embedding similarity, semantic matching, and entity authority — the unit of retrieval is a passage, not a URL.

This distinction means a page that ranks #1 on Google can score zero retrievals from AI systems. Optimizing for LLM retrieval requires passage-level formatting, semantic depth, and structured data — not just on-page keyword placement. The two systems reward different content architectures.

Does E-E-A-T affect how LLMs retrieve and cite content?

Yes. E-E-A-T is a direct LLM retrieval signal. AI systems are trained to favor expert-authored, credibly sourced content and apply authority filters when selecting which passages to cite in generated answers.

LLMs encode patterns from training data that reflect real-world credibility — author credentials, institutional associations, citation frequency, and factual consistency. Implementing author schema, maintaining consistent bylines across publications, and earning references from established sources directly improves how LLMs evaluate your content’s credibility during the citation selection stage.

How can I optimize my content to appear in AI-generated answers?

To appear in AI answers: build topical authority through content clusters, define entities clearly, implement FAQPage and Article schema, earn co-citations from authoritative sources, and structure content with 40–60 word direct-answer passages at each H3.

The single fastest win for most sites is formatting. Adding explicit direct-answer blocks beneath relevant headings — structured to stand alone without surrounding context — dramatically improves passage retrievability. Pair that with FAQPage schema and author markup, and you address both the relevance and credibility filters simultaneously.

What role does structured data play in LLM retrieval?

Schema markup removes ambiguity. It explicitly tells AI systems what your content is, who created it, and what questions it answers — reducing inference errors and increasing retrieval confidence across all LLM systems.

Without structured data, retrieval systems must infer your content’s meaning from context alone. With FAQPage, Article, and Person schema, you provide machine-readable signals that directly inform entity classification, author credibility assessment, and question-answer extraction. FAQPage schema in particular has a measurable impact on the probability that your explicit Q&A blocks are extracted and cited verbatim.

Published by Khalid SEO — LLMO Strategy & AI Search Optimization
