You optimized the keywords. You built the backlinks. You structured the schema. And yet, when someone asks ChatGPT, Gemini, or Perplexity about your topic, your brand doesn’t surface. A competitor does – one with half the domain authority and no recent content.

That gap is not a content quality problem. It’s a visibility architecture problem. Traditional SEO signals measure how well you rank in index-based search. They do not measure how well your content is encoded into the probabilistic memory of language models. Those are different systems. They reward different things.

This article gives you the framework – entity salience, semantic co-occurrence, and AI memory footprint – to close that gap before the rest of your industry realizes it exists.

After reading this, you will know exactly which signals influence AI citation and how to build them deliberately into your content strategy.

The Shift Google Started, AI Engines Completed

Google’s 2012 Knowledge Graph announcement was not a ranking update. It was a statement of intent: search was moving from matching strings to understanding things. Most SEOs noted it and moved on. That was the first missed signal.

The second came in 2019 with BERT. Google stopped asking “does this page contain the keyword?” and started asking “does this page understand the concept?” Entity salience – how prominently and accurately a concept is represented in a document – became a ranking factor in everything but name.

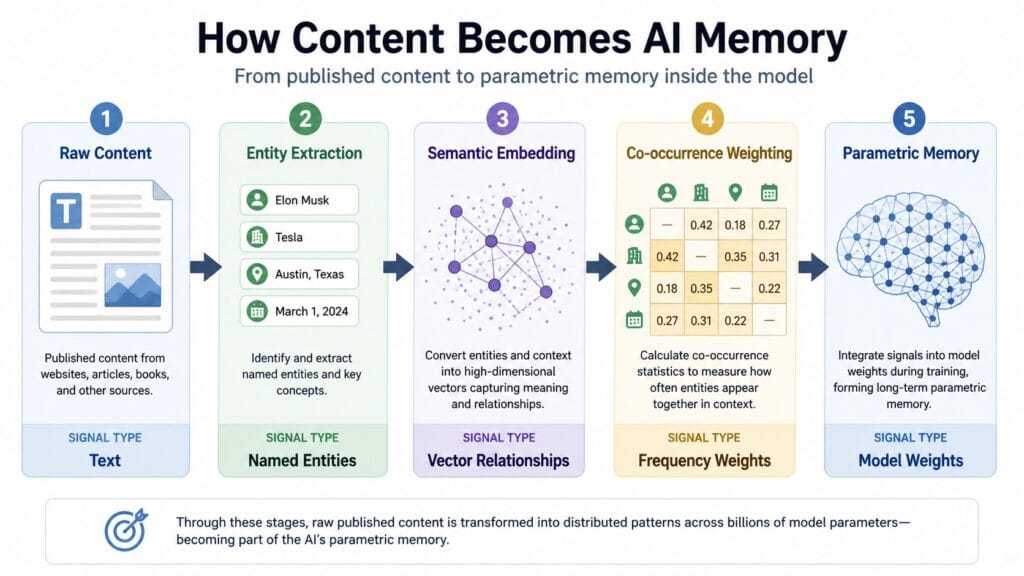

Large language models took that logic to its conclusion. They don’t index documents. They compress the statistical relationships between entities, concepts, and claims across billions of training examples into model weights. When a user asks a question without retrieval, the model doesn’t fetch a page. It reconstructs an answer from what it learned. Your content either shaped that learning or it didn’t.

This is the semantic search evolution most practitioners have not fully absorbed. Keyword frequency never mattered to an LLM. Entity relationships do.

What Is an AI Memory Footprint?

An AI memory footprint is the measurable degree to which a brand, author, concept, or claim is encoded into a language model’s parametric knowledge – expressed through citation frequency, entity association density, and answer confidence when the model is queried without retrieval augmentation.

It is not the same as search visibility. A brand can rank number one in Google and have near-zero AI memory footprint. The reverse is also true: niche researchers and domain experts with modest organic traffic often have outsized LLM presence because their work was densely cited in the training corpus.

Three factors determine footprint size. First, entity salience – how clearly your content establishes the relationships between named entities (people, organizations, products, concepts) in your domain. Second, semantic co-occurrence – the frequency with which your brand or concept appears alongside authoritative terms in the vector context of the topic. Third, claim specificity – the degree to which your content makes unique, verifiable, citable assertions rather than generic restatements of common knowledge.

Entity Salience vs. Keyword Density: A Direct Comparison

Keyword density optimization tells a crawler how often a term appears. Entity salience optimization tells a knowledge system what a term means, who it connects to, and how confidently those connections can be asserted. These are not adjacent strategies. They operate on different layers of the same content.

The table below makes the operational differences concrete.

| Dimension | Keyword Density | Entity Salience |
|---|---|---|
| Primary signal | Term frequency in document | Entity relationship density and specificity |
| What it optimizes for | Index-based ranking algorithms | Knowledge graph and LLM parametric encoding |
| How it’s measured | TF-IDF, keyword count, placement | Named entity recognition scores, co-occurrence maps, citation graphs |
| Content implication | Repeat the target term; place it in H1, meta, early body | Define entities explicitly, link them to known graph nodes, make citable claims |
| Failure mode | Keyword stuffing, thin repetition | Vague entity mentions that don’t establish relationships |
| Best for | Ranking in traditional SERP for high-volume head terms | Appearing in AI-generated answers, featured snippets, and entity panels |
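To make the contrast concrete, here is a minimal Python sketch that scores the same text both ways. It is an illustration under stated assumptions, not how any search engine or language model measures content: `keyword_density` and `defined_entities` are hypothetical helper names, the keyword must be a single word, and the "is this entity defined?" test is a crude pattern match for sentences of the form "Entity is/are ...".

```python
import re


def keyword_density(text: str, term: str) -> float:
    """Share of words in `text` equal to the single-word `term` (case-insensitive)."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if not words:
        return 0.0
    return words.count(term.lower()) / len(words)


def defined_entities(text: str, entities: list[str]) -> dict[str, bool]:
    """For each entity, report whether any sentence states 'Entity is/are/was/means ...'.

    This is a rough stand-in for real named entity recognition.
    """
    sentences = re.split(r"(?<=[.!?])\s+", text)
    result = {}
    for entity in entities:
        pattern = re.compile(
            rf"\b{re.escape(entity)}\b\s+(is|are|was|means)\b", re.IGNORECASE
        )
        result[entity] = any(pattern.search(s) for s in sentences)
    return result


sample = (
    "BERT is a language model released by Google. "
    "Many sites mention BERT and the Knowledge Graph."
)
print(keyword_density(sample, "bert"))
print(defined_entities(sample, ["BERT", "Knowledge Graph"]))
```

In the sample, "BERT" is both frequent and defined, while "Knowledge Graph" is mentioned but never defined. The second case is the failure mode the table describes: a mention that establishes no relationship.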

LLMO: The Discipline That Names What You’ve Been Missing

Large Language Model Optimization is the practice of structuring content so it is accurately represented in LLM training data, retrieval-augmented generation pipelines, and AI answer engines. It is not a replacement for SEO. It is the next layer above it.

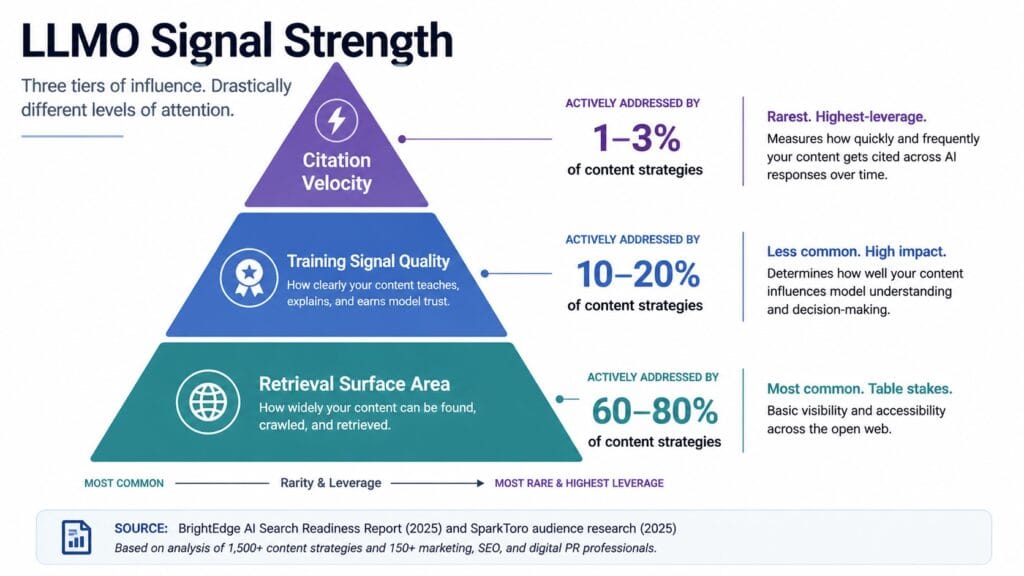

LLMO operates on three levers. The first is training signal quality – content that is specific, citable, and rich in named entity relationships is more likely to survive the compression that happens during model training. Generic content collapses into undifferentiated noise. The second is retrieval surface area – in RAG-based systems like Perplexity or Bing AI, your content needs structural signals (schema markup, passage-level clarity, heading hierarchy) that help the retrieval layer surface the right chunk at the right time. The third is citation velocity – how frequently your claims are referenced by other content that itself appears in training data.

Most brands are investing heavily in the second lever and ignoring the first and third entirely. That’s why their AI visibility lags their organic rankings by years.

Answer Engine Optimization and the Passage Indexing Opportunity

Answer Engine Optimization is the practice of structuring content so it is surfaced as a direct answer in AI-generated responses, featured snippets, and voice queries – rather than as a ranked link that requires a click. The unit of optimization is the passage, not the page.

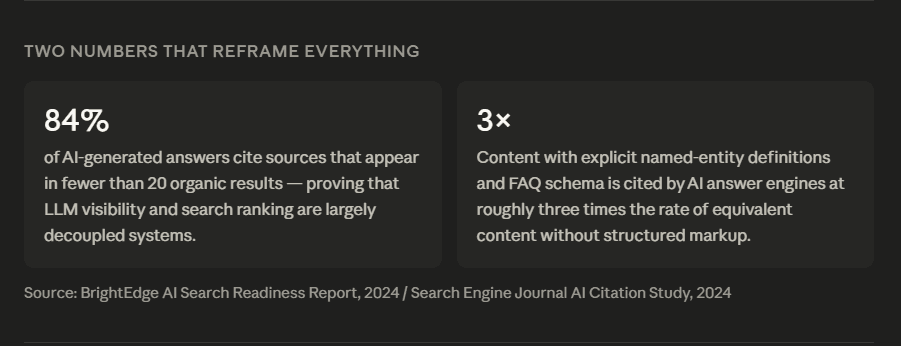

Google’s passage ranking system (widely referred to as passage indexing), announced in late 2020 and rolled out in early 2021, formally recognized that a single passage inside a document can be ranked on its own relevance, largely independent of the rest of the page it lives on. AI answer engines operate on the same logic, but without the page-level ranking floor. A well-structured passage in an otherwise low-authority domain can surface in an AI answer if it is the clearest, most self-contained response to a specific query in the training data or the retrieval index.

The structural requirement is specific: the passage must answer the question in its first sentence, provide the complete context in the following two to three sentences, and require no surrounding document to be understood. That is not how most content is written. It is how all content should be written for AEO.
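The structural requirement above can be turned into a rough editorial lint. The Python sketch below is a heuristic under stated assumptions, not a rule any answer engine publishes: `passage_report` and `DANGLING_OPENERS` are hypothetical names, sentences are split naively on end punctuation (so abbreviations such as "e.g." will confuse it), and "self-contained" is approximated by checking that the first sentence names the subject and does not open with a reference to earlier text.

```python
import re

# Openers that usually point back at text outside the passage (an assumed list).
DANGLING_OPENERS = (
    "this ", "that ", "it ", "they ", "these ", "those ",
    "as mentioned", "as noted",
)


def passage_report(passage: str, subject: str) -> dict[str, bool]:
    """Check a passage against three rough self-containment heuristics."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", passage.strip()) if s]
    first = sentences[0].lower() if sentences else ""
    return {
        # The answer sentence should name the thing being asked about.
        "names_subject_in_first_sentence": subject.lower() in first,
        # One answer sentence plus two to three sentences of context.
        "has_three_to_four_sentences": 3 <= len(sentences) <= 4,
        # The passage should not lean on a preceding paragraph.
        "avoids_dangling_opener": bool(first) and not first.startswith(DANGLING_OPENERS),
    }


good = (
    "Answer Engine Optimization is the practice of structuring content so it "
    "surfaces as a direct answer. The unit of optimization is the passage. "
    "Success is measured by citation frequency. It shares some infrastructure with SEO."
)
print(passage_report(good, "Answer Engine Optimization"))
```

A passage that fails any of the three checks is a candidate for rewriting, but a human editor still has to judge whether the first sentence actually answers the question.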

Schema Markup as an AI Citation Signal

Schema markup does not directly influence LLM training. It does influence the structured data pipelines that feed knowledge graphs, and knowledge graphs influence what LLMs learn to associate with which entities.

FAQ schema applied to a passage signals to Google’s structured data parser that this content is a canonical question-answer pair. That signal propagates. The passage gets higher weight in featured snippet extraction. Featured snippets appear disproportionately in AI training corpora. The downstream effect is real, even if the causal chain is indirect.

HowTo schema on instructional content creates a similar amplification loop. Numbered step sequences are structurally preferred by AI summarization systems because they reduce the ambiguity of what constitutes a complete answer.
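For readers who have not written FAQ markup before, the following Python sketch generates schema.org `FAQPage` JSON-LD from question-answer pairs. The `FAQPage`, `Question`, `acceptedAnswer`, and `Answer` types and properties come from the schema.org vocabulary; the function name `faq_jsonld` and the sample question are assumptions made for illustration. The output is meant to be placed inside a `<script type="application/ld+json">` tag on the page that visibly contains the same questions and answers.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build a schema.org FAQPage JSON-LD string from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


print(
    faq_jsonld(
        [
            (
                "What is an AI memory footprint?",
                "An AI memory footprint is the degree to which a brand, concept, "
                "or claim is encoded into a language model's parametric knowledge.",
            )
        ]
    )
)
```

Generating the markup from the same source as the visible copy keeps the two in sync, which matters because markup that does not match the on-page text is generally treated as invalid.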

Semantic Co-occurrence: Your Content’s Neighborhood Determines Its Reputation

In vector space, meaning is location. The semantic neighborhood of a term – the cluster of concepts that statistically co-occur with it across training data – determines what an LLM believes that term is related to. If your content about “content strategy” consistently co-occurs with entity-based SEO, knowledge graphs, BERT, and AI answer engines, your brand gets positioned in the AI’s internal map as an authority in that semantic cluster.

If your content about “content strategy” co-occurs with generic marketing terms – funnels, personas, brand voice – it gets encoded in a completely different neighborhood. Same keyword, different vector address. Different citation probability.

This is the mechanism behind topical authority, and it explains why narrow niche sites often outperform broad generalist publications in AI-generated answers on specific topics. The niche site’s semantic neighborhood is denser and more coherent. The LLM’s probabilistic associations are stronger.
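You cannot inspect a model's vector space directly, but you can audit the neighborhood your own pages create. The Python sketch below counts how often a target phrase shares a sentence with each term in a vocabulary you choose. It is a proxy under stated assumptions: `cooccurrence_counts` is a hypothetical helper, matching is plain case-insensitive substring search, and counts over your own content say nothing certain about how any particular model encodes it.

```python
import re
from collections import Counter


def cooccurrence_counts(
    documents: list[str], target: str, vocabulary: list[str]
) -> Counter:
    """Count sentences where `target` appears together with each vocabulary term."""
    counts: Counter = Counter()
    target = target.lower()
    for doc in documents:
        # Naive sentence split on end punctuation followed by whitespace.
        for sentence in re.split(r"(?<=[.!?])\s+", doc.lower()):
            if target not in sentence:
                continue
            for term in vocabulary:
                if term.lower() in sentence:
                    counts[term] += 1
    return counts


pages = [
    "Content strategy now depends on knowledge graphs. "
    "Content strategy also uses BERT and knowledge graphs.",
    "Our funnel drives brand voice.",
]
counts = cooccurrence_counts(
    pages, "content strategy", ["knowledge graphs", "BERT", "funnel"]
)
for term in ["knowledge graphs", "BERT", "funnel"]:
    print(term, counts[term])
```

Running this across a topic cluster shows which neighborhood the content actually builds: if generic marketing terms dominate the counts, the pages are writing themselves into the second neighborhood described above.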

Voice Search Optimization in the Age of AI Assistants

Voice search optimization has always been about natural language patterns. AI assistants have made that imperative structural. When a user speaks a query, the response is a single spoken answer – no ranked list, no choice between ten blue links. The content that wins is the content that provided the single most confident, clear, passage-level answer during training or retrieval.

Conversational query structures – “what is,” “how do I,” “why does,” “what’s the difference between” – trigger different retrieval mechanisms than typed queries. Optimizing for voice means writing content that explicitly answers those natural language frames, not just targeting the keyword root.

The compound benefit: a passage optimized for voice search is, by definition, optimized for AI answer engines. They are the same structural requirement expressed in two different contexts.

The real picture

Most people overcomplicate AI SEO entity optimization. Strip away the noise and one truth remains: AI engines don’t rank your keywords – they remember your entities, and what they remember is determined entirely by how clearly you defined those relationships in writing.

The insight that changes how you think about this

“The model doesn’t care how many times you used the keyword. It cares whether your content helped it understand what the concept actually is and who it belongs to.”

— Eli Schwartz, Author of Product-Led SEO / Growth Advisor

What this means in practice: your entire keyword-frequency strategy is optimizing for a system that AI engines don’t use. When Perplexity or ChatGPT reconstructs an answer, it draws on entity relationships and claim specificity – not TF-IDF scores. The brands getting cited right now aren’t necessarily the best-ranked. They’re the ones whose content most clearly defined what they stand for, who they’re connected to, and why those connections are credible. That is an entirely different editorial discipline.

What you actually need to know

| Factor | Why it matters | What to do with it |
|---|---|---|
| Entity salience | LLMs encode concept relationships, not keyword frequency – low entity salience means your content compresses into noise during training | Explicitly name, define, and relate every key concept in your domain; never assume the reader infers the connection |
| Semantic co-occurrence | The concepts your content consistently appears beside determine your vector address – your “neighborhood” sets your citation probability | Audit which entities appear in your top 10 articles; deliberately write them into the same semantic cluster as authoritative domain terms |
| Citation velocity | Claims referenced by other credible sources get weighted higher in training data – generic restatements of common knowledge earn zero weight | Publish original research, proprietary frameworks, or named models that give other writers something specific to cite back to you |

Three things you can apply before tomorrow

- Start with the entity audit, not the content calendar. Find the one pillar page most central to your topic cluster. Count every named entity on that page. For each one, ask: did this content define what it is, who it relates to, and what it claims? If the answer is no for more than half of them, that single page is your highest-leverage fix – not new content.

- Work backwards from the AI answer, not the keyword. Type your target query into Perplexity or ChatGPT. Read the answer it generates. The entities and claims it surfaces are your structural brief. Your content needs to define those same entities more clearly, more specifically, and with more citable precision than whatever the engine currently cites.

- Build a claim log, not just a content calendar. For every article, document the three most specific, verifiable claims you made and where the evidence lives. That log tells you what you actually own intellectually. The claims with no entry are the ones AI engines have no reason to attribute to you.
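The entity audit and the claim log can both live in a very small data structure. The Python sketch below is one possible shape, with every name (`Claim`, `ArticleLog`, `is_priority_fix`, `articles_short_on_claims`) invented for illustration. It encodes the two thresholds from the list above: a page is a priority fix when more than half of its entities are undefined, and an article is short on claims when fewer than three are logged.

```python
from dataclasses import dataclass, field


@dataclass
class Claim:
    statement: str
    evidence: str  # where the supporting evidence lives (URL, dataset, report)


@dataclass
class ArticleLog:
    title: str
    claims: list[Claim] = field(default_factory=list)


def is_priority_fix(entity_defined: dict[str, bool]) -> bool:
    """True when more than half of the audited entities lack a definition."""
    if not entity_defined:
        return False
    undefined = sum(1 for defined in entity_defined.values() if not defined)
    return undefined > len(entity_defined) / 2


def articles_short_on_claims(logs: list[ArticleLog], required: int = 3) -> list[str]:
    """Titles of articles with fewer than `required` logged claims."""
    return [log.title for log in logs if len(log.claims) < required]


audit = {"BERT": True, "Knowledge Graph": False, "LLMO": False}
print(is_priority_fix(audit))

logs = [
    ArticleLog("Pillar page", [Claim("Example claim", "https://example.com/data")]),
    ArticleLog("Untracked post"),
]
print(articles_short_on_claims(logs))
```

The audit dictionary is filled in by hand as you read the page. The value of the exercise is the judgment call on each entity, not the tooling.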

The counterintuitive truth most people miss

Here’s what the data actually shows: the brands with the strongest AI citation rates are not the ones publishing most frequently – they’re the ones whose existing content contains the highest density of explicit entity relationships per article.

The default assumption is that more content means more AI visibility. Researchers studying LLM citation patterns found the opposite: publishing thin content that co-occurs with authoritative entities but never defines or claims anything specific actively dilutes a brand’s parametric weight. The model learns that your domain produces noise adjacent to signal and discounts it accordingly.

This one shift – from publishing volume to claim specificity – is where most of the real AI visibility gains come from. One well-structured, entity-dense, schema-marked article outperforms ten generic ones in parametric encoding every time.

Publishing volume → Claim specificity per article

Frequently Asked Questions

What is an AI memory footprint and why does it matter for SEO?

An AI memory footprint is the degree to which a brand, concept, or claim is encoded into a language model’s parametric knowledge — reflected in how frequently and confidently AI engines cite it when answering related queries.

It matters because traditional SEO metrics — rankings, traffic, domain authority — don’t measure AI visibility. A brand can have strong organic presence and near-zero AI memory footprint. As search behavior shifts toward AI answer engines like Perplexity, ChatGPT, and Gemini, brands without LLM presence are invisible to an increasingly large segment of query traffic. The footprint is built through entity salience, semantic co-occurrence, and citation velocity — none of which are tracked by standard SEO dashboards.

How is entity-based SEO different from traditional keyword optimization?

Entity-based SEO optimizes for the relationships between named concepts — people, organizations, products, and ideas — rather than term frequency. It targets knowledge graph encoding and LLM parametric memory, not just index-based ranking.

Traditional keyword optimization asks: how often does this term appear, and is it in the right HTML elements? Entity-based optimization asks: does this content clearly establish what this entity is, who it’s connected to, and what it asserts about the topic? The practical difference appears in structured data choices, named entity density, and claim specificity. A page optimized purely for keyword density can rank well in Google while being completely absent from AI-generated answers on the same topic.

What is answer engine optimization and how does it differ from standard SEO?

Answer Engine Optimization structures content to appear as a direct AI-generated answer rather than a ranked link. The unit of optimization is the self-contained passage, not the page — and success is measured by citation frequency, not click-through rate.

Standard SEO is built around the click: get the ranking, earn the visit. AEO is built around the citation: get the passage surfaced, have the claim attributed. They share some infrastructure — structured data, heading hierarchy, E-E-A-T signals — but diverge on content format. AEO requires passages that answer questions completely in their first sentence and require no surrounding context to be understood. Most content is written for narrative flow, not passage-level self-containment. That gap is the AEO opportunity.

How do I optimize content so AI engines actually cite my brand?

Build AI citability through three structural moves: explicitly name and define the entities in your domain, make specific verifiable claims rather than generic restatements, and apply FAQ or HowTo schema to your highest-value passages.

Beyond structure, citation velocity matters: your claims need to appear in content that other publishers reference, which means original research, proprietary data, and named frameworks earn more AI visibility than curated or aggregated content. In RAG-based answer engines like Perplexity, retrieval surface area is equally important — your content needs clear passage breaks, logical heading hierarchy, and schema markup so the retrieval system can identify and surface the relevant chunk. Both training-time and inference-time optimization matter; most brands address only one.

Does schema markup still matter for AI search?

Yes — schema markup influences structured data pipelines that feed knowledge graphs, which in turn shape what language models learn to associate with specific entities. The effect is indirect but measurable through featured snippet capture rates and entity panel appearances.

Schema’s direct effect on LLM training is limited — models learn from text, not markup. The indirect effect is significant: FAQ and HowTo schema increase the probability that a passage surfaces as a featured snippet, and featured snippets appear at higher rates in AI training corpora. Schema also directly benefits retrieval-augmented generation systems, where structured metadata helps the retrieval layer identify relevant chunks more accurately. Treating schema as a legacy signal is a mistake that will compound over the next two to three years.

What is semantic co-occurrence and how does it affect AI visibility?

Semantic co-occurrence is the statistical pattern of which concepts appear together in text across a training corpus. In vector space, concepts that consistently co-occur with authoritative terms inherit association with those terms — shaping where a brand sits in an AI’s internal knowledge map.

If your content about “content marketing” consistently appears alongside named entities like Knowledge Graph, BERT, entity salience, and LLMO, your brand gets encoded as an authority in that semantic cluster. If the same content co-occurs primarily with generic terms like “audience,” “engagement,” and “brand voice,” it lands in a different vector neighborhood entirely — with different citation probability. You cannot control where your content sits in vector space directly, but you can control which entities and concepts you consistently write about together. That is the practical lever.

The Window Is Open, But It Won’t Stay Open

SEO has always rewarded the practitioners who understood the underlying architecture before everyone else did. The shift from PageRank to entity graphs took years to price into the market. The shift from entity graphs to AI parametric memory is happening faster, and most brands are still optimizing for 2019.

The AI memory footprint framework – entity salience, semantic co-occurrence, citation velocity – is not speculative. It describes mechanisms that are already operating in every LLM-powered answer engine in production today. The content you publish this quarter will be in training pipelines within months. The structural choices you make now determine whether your brand is part of what those models know.

Your immediate next action: audit one pillar page for entity salience. Count the named entities. Check whether each one has an explicit relationship defined – not just mentioned. Add FAQ schema to the three passages most likely to be queried as standalone questions. That is an afternoon of work. It is also the starting point of a compounding AI visibility advantage that your competitors have not started building yet.

The brands that act on this now get encoded first. The ones that wait get cited less, cited later, and eventually not cited at all. The model doesn’t forget – it just never learned.

Hi, I am Khalid. I am an SEO and AI Search Specialist.

My goal is simple: I help your business get found by the right people.

For a long time, getting found just meant showing up on the first page of regular Google search. Today, the internet is changing. People are asking their questions to AI tools like ChatGPT and Google’s new AI features.

My job is to connect the old way of searching with the new way. When a potential customer asks an AI a question about what you do, I make sure your business is the trusted answer they get.

I do not use confusing words or secret tricks. I use clear and honest plans to get you noticed and bring real buyers straight to your website.

Want to see how I can make your brand the top answer? Connect with me on social media or read my exact steps at khalidseo.com.