You rank on Google. Your traffic is fine. But when someone asks ChatGPT or Perplexity about your topic – you don’t exist. Here’s the diagnostic nobody’s running.

You spent months building authority in your space. Your pages rank. Some of them rank well. But when a potential customer opens ChatGPT and asks about the exact problem your business solves – your brand isn’t in the answer.

Someone else is. A smaller site. A site with fewer backlinks. A site you’ve never worried about in Google Search Console.

This isn’t a fluke. And it isn’t about your technical SEO. It’s about a filter most content teams haven’t been told exists – one that AI models apply before they decide who gets cited and who gets quietly ignored.

This article isn’t another guide on “how to optimise for AI.” It’s a diagnosis. It names the specific patterns that trigger citation exclusion, explains the mechanism behind them, and gives you a framework to audit your own content against it.

The numbers behind the gap

First, some data – because this shift is moving faster than most marketing teams have registered.

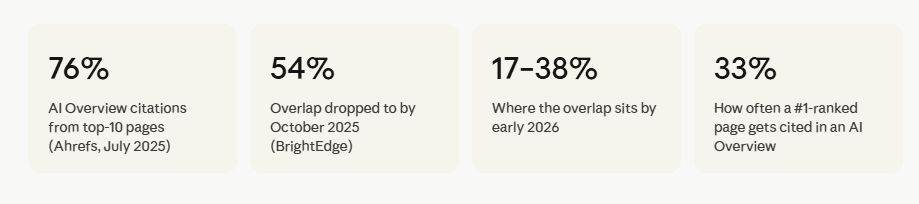

In mid-2025, about three-quarters of pages cited in Google’s AI Overviews also ranked in the top 10 organic results. By early 2026, that figure had dropped to somewhere between 17% and 38%, depending on the study.

That’s not a gradual drift. That’s a structural break between two systems that used to move together.

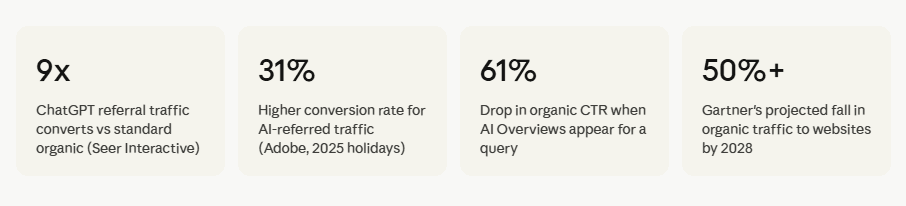

Meanwhile, the stakes are rising. According to Seer Interactive’s analysis of 25 million impressions, organic click-through rates for queries that trigger AI Overviews fell from 1.76% to 0.61% between June 2024 and September 2025, a decline Seer reports as 61%. Sixty percent of searches now end without a single click to any website.

If you’re not cited, you’re not in the conversation. And increasingly, the conversation is the whole game.

Why ranking and being cited are completely different problems

Short answer: Google ranks pages. AI models construct answers. Those are different tasks that reward different signals, and the SEO playbook built around the first task actively undermines performance on the second.

| Signal type | What Google rewards | What AI models trust |
|---|---|---|
| Authority | Backlinks, domain rating, link profile | Brand mentions across independent sources |
| Content quality | Keyword relevance, length, engagement | Specificity, original data, citable claims |
| Structure | Headers, internal links, technical health | Answer-first layout, extractable passages |
| Freshness | Publication date, content updates | Recency signals, dated statistics |
| Trust signals | E-E-A-T, author credentials | Third-party validation, entity density, named experts |

Google’s algorithm learned to treat backlinks as a proxy for quality. That correlation is mostly valid and also deeply gameable. Two decades of SEO have produced a large category of content that ranks well precisely because it’s optimised for those proxies, not because it’s actually useful.

AI models weren’t trained on ranking signals. They were trained on text. They developed an implicit sense of what trustworthy, citable content looks like, and that sense is different from what Google measures.

The data confirms it. Ahrefs found that brand mentions are three times more predictive of AI visibility than backlinks: a correlation of 0.664 versus 0.218. The signal that built an entire industry carries a fraction of the weight in AI citation decisions.

The mechanism: why a #1 ranking still isn’t enough

There’s a specific technical reason the ranking-to-citation link has weakened, and it explains a lot about why some content gets surfaced and other content doesn’t.

When someone searches and an AI Overview is triggered, the system doesn’t evaluate a single query against your single best-matching page. It expands the original query into multiple related sub-queries simultaneously. Google calls this query fan-out.

Each sub-query retrieves the most relevant content chunk for that specific angle. The pages that appear consistently across the most sub-queries are the ones that get cited in the final answer.

A page optimised for one keyword – even if it’s the #1 result for that keyword – may only satisfy one of the five or six sub-queries the AI is evaluating. A page that covers a topic from multiple angles, addresses related questions, and has genuine depth across a subject will show up in more sub-query retrievals.

That’s why narrow keyword optimisation fails the fan-out test. And why topical depth beats keyword precision in AI search.
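To make the fan-out idea concrete, here is a toy sketch. Everything in it is a hypothetical stand-in: the sub-queries, the two pages, and the word-overlap matching are invented for illustration, and real systems use learned retrieval rather than word overlap. It only shows why a page that covers several angles is retrieved for more sub-queries than a page tuned to a single keyword.

```python
def tokens(text):
    """Lowercase a string and split it into a set of words."""
    return set(text.lower().split())


def coverage(page_sections, sub_queries, min_overlap=2):
    """Count the sub-queries for which at least one section shares
    `min_overlap` or more words with the sub-query.
    Word overlap is a crude, assumed proxy for retrieval."""
    hits = 0
    for query in sub_queries:
        q = tokens(query)
        if any(len(q & tokens(section)) >= min_overlap for section in page_sections):
            hits += 1
    return hits


# Hypothetical fan-out of one original query into four sub-queries.
sub_queries = [
    "best crm small business",
    "crm pricing comparison",
    "crm setup time",
    "crm data migration",
]

# A page tuned to one keyword versus a page covering several angles.
narrow_page = ["best crm small business picks"]
broad_page = [
    "best crm small business picks",
    "crm pricing comparison by tier",
    "typical crm setup time",
    "crm data migration checklist",
]

narrow_hits = coverage(narrow_page, sub_queries)
broad_hits = coverage(broad_page, sub_queries)
print(narrow_hits, broad_hits)
```

In this toy setup the narrow page matches only the sub-query it was written for, while the broad page matches all four.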

The four content patterns AI models consistently ignore

Citation exclusion isn’t random. These four patterns show up repeatedly in pages that rank well but never get cited, and they’re all diagnosable before you publish.

Pattern 1: over-optimised affiliate and commercial content

Affiliate content has a recognisable shape. Keyword in the first sentence. Comparison table. A “best pick” box. Affiliate links in the CTA. AI models have ingested enough of this format to recognise the structure, and the structure signals that the goal is conversion, not clarity.

This doesn’t mean commercial content is automatically excluded. It means content where the entire architecture serves the sale rather than the answer reads as low-trust to a retrieval system. Intent shows through structure.

Pattern 2: SEO-first writing that buries the answer

CXL’s research into AI Overview citation patterns found that 55% of AI citations come from the first 30% of a page. Only 21% come from the bottom 40%.

AI retrieval systems don’t read pages the way humans do. They parse in chunks, looking for the extractable answer early. A long intro, a brand story, a “why this matters” warm-up – all invisible to the extraction layer. If your core answer isn’t surfaced in the first few hundred words, the model may skip the page entirely.
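One rough way to check this on your own pages is to measure how far into the text the core answer first appears. The sketch below is a minimal illustration, not a tool any AI system uses: the function name `answer_position`, the sample pages, and the 30% cut-off (borrowed from the CXL figure above) are assumptions, and the matching is a plain word-sequence comparison that ignores punctuation differences.

```python
def answer_position(page_text, answer_phrase):
    """Return how far into the page (0.0 to 1.0, by word count) the
    phrase first appears, or None if the phrase is absent."""
    words = page_text.lower().split()
    phrase = answer_phrase.lower().split()
    if not words or not phrase:
        return None
    for i in range(len(words) - len(phrase) + 1):
        if words[i:i + len(phrase)] == phrase:
            return i / len(words)
    return None


# Hypothetical pages of 100 words each: one buries the answer, one leads with it.
buried = " ".join(["intro"] * 80 + ["use", "answer-first", "layout"] + ["detail"] * 17)
front_loaded = " ".join(["use", "answer-first", "layout"] + ["detail"] * 97)

for name, page in [("buried", buried), ("front_loaded", front_loaded)]:
    pos = answer_position(page, "answer-first layout")
    in_first_30 = pos is not None and pos <= 0.30
    print(name, pos, in_first_30)
```

A page that scores well past the 0.30 mark is a candidate for restructuring so the answer comes first.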

Pattern 3: hedged, non-committal writing

“It depends.” “Results may vary.” “Some experts believe X, while others argue Y.”

Sometimes hedging is honest. But content that never takes a position gives AI models nothing to attribute. There’s no claim to cite. No insight to surface. A model synthesising consensus can produce vague hedged content on its own – it only needs to cite you if you offer something more specific.

The Princeton GEO study quantified this directly: keyword stuffing decreased AI visibility by 10%, while declarative statements backed by statistics improved it by up to 40%. The writing style that games keyword rankings actively hurts citation probability.
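A quick self-check for this pattern is to count how many sentences on a page lean on stock hedges. The sketch below is only a rough heuristic under stated assumptions: the phrase list comes from the examples above and is far from complete, the sentence splitting is naive, and `hedge_ratio` is a hypothetical helper, not a measure any AI system is known to compute.

```python
import re

# Assumed starter list, taken from the example phrases above; extend as needed.
HEDGE_PHRASES = ["it depends", "results may vary", "some experts believe"]


def hedge_ratio(text):
    """Return the share of sentences that contain at least one hedge phrase."""
    # Naive split on sentence-ending punctuation; good enough for a rough audit.
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    if not sentences:
        return 0.0
    hedged = sum(
        1 for s in sentences if any(p in s.lower() for p in HEDGE_PHRASES)
    )
    return hedged / len(sentences)


sample = (
    "It depends. Results may vary. "
    "Adding statistics improved visibility by up to 40 percent. "
    "Some experts believe X, while others argue Y."
)
print(hedge_ratio(sample))
```

A high ratio does not prove a page will be skipped, but it flags passages where a specific, attributable claim could replace a hedge.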

Pattern 4: no third-party corroboration

AI models don’t evaluate your content in isolation. They look for evidence that your brand and claims are validated across independent sources – news coverage, industry forums, academic references, community discussion.

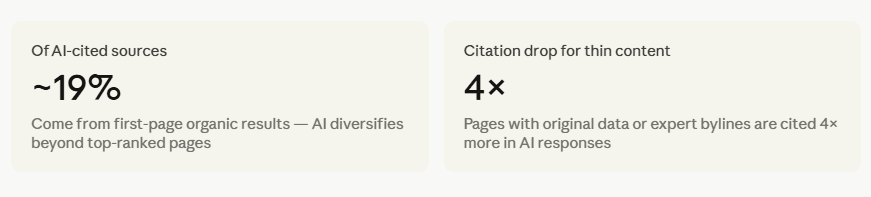

An Ahrefs study found almost no relationship between the number of pages on a site and AI citation frequency. Content volume doesn’t move the needle. External mention density does. Reddit’s growth to 1.4 billion monthly visits by April 2025 was driven in part by its explosive rise in AI citations – because authentic, experience-based community discussion reads as third-party validation that polished marketing copy can’t replicate.

The AI Trust Filter framework

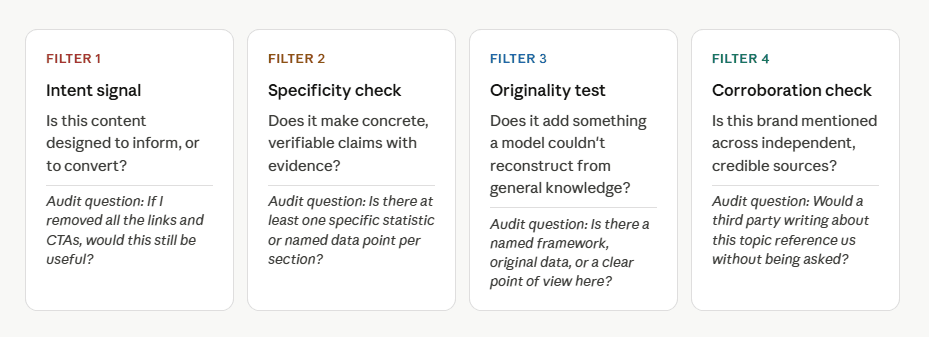

Think of it as four questions the model implicitly asks before deciding whether to cite a source. It is not an official system, but a pattern that emerges consistently from what the research shows about citation selection.

Content that passes all four filters gets cited. Content that fails one or more gets synthesised away – meaning the model uses the information without attributing it, or skips the page entirely.

What gets cited instead and why

The positive pattern is the mirror image of the exclusion patterns above. Research from Princeton University, Georgia Tech, IIT Delhi, and the Allen Institute for AI established the GEO benchmark framework across 10,000 queries. Their findings on what improves AI citation visibility:

- Adding statistics: up to +40% citation visibility improvement

- Including quotations from named sources: up to +37%

- Citing credible external sources within the content: up to +30%

- Keyword stuffing (for comparison): −10% – it actively hurts

A separate analysis of 15,847 AI Overview results found that semantic completeness – covering a topic thoroughly and in a structured way – is the single strongest citation predictor, with a correlation of 0.87. Content scoring above 8.5 out of 10 on semantic completeness is 4.2 times more likely to be cited.

Pages with 15 or more recognised named entities show 4.8 times higher citation probability. This isn’t about cramming in brand names. It’s about writing with the depth and specificity that naturally produces entity-rich content – real people, real organisations, real studies, real data.

“AI systems don’t cite content that ranks. They cite content they can extract, attribute, and trust, and those are three different tests, all of which your page has to pass.”

The business case: what citation exclusion is actually costing you

This is where the SEO conversation becomes a revenue conversation.

Seer Interactive found that ChatGPT referral traffic converts at 15.9% – compared to 1.76% for standard Google organic search. That’s a 9x difference in conversion rate from the same audience. Adobe’s analysis of the 2025 holiday season found that generative AI traffic converted 31% higher and drove 32% more revenue per visit than non-AI traffic sources.

The pattern is clear. Traffic from AI platforms is still a small fraction of total web traffic, but it converts at a significantly higher rate, because visitors arrive already informed and further along in their decision. If your brand isn’t cited, you’re not just losing visibility. You’re losing your highest-converting traffic segment.

Note on sector variation: In YMYL (“Your Money or Your Life”) categories – healthcare, insurance, education – the overlap between AI citations and top-10 organic rankings is still 68–75%. AI systems apply stricter sourcing standards here, and domain authority still carries significant weight. The citation gap is widest in informational and general knowledge content.

A practical audit: how to find out if you’re being excluded

Before you restructure your content strategy, confirm the problem. Here’s a five-step audit you can run this week:

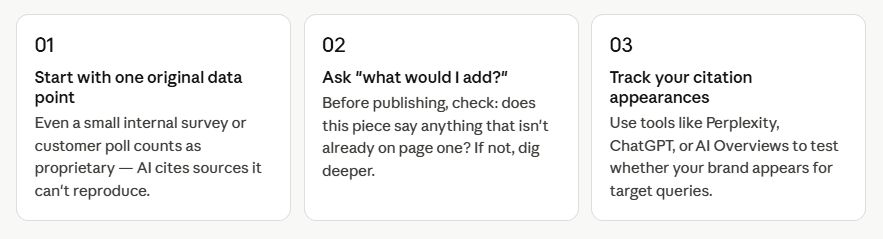

- Manual sampling. Query ChatGPT, Perplexity, and Google AI Mode with your top 10 target questions. Document every time you’re cited versus every time a competitor is cited instead.

- Set up AI referral tracking in GA4. ChatGPT referral traffic has been trackable via UTM parameters since June 2025. Create a segment for all sessions sourced from AI platforms and track it as a separate channel.

- Run the AI Trust Filter audit. Apply the four filters above to your five highest-traffic pages. Score each one: Does it pass the intent signal check? Is there a specific, verifiable claim in the first 30% of the page? Does it offer something a model couldn’t reconstruct from consensus?

- Audit answer placement. Check whether your core answer to the page’s target question appears within the first 200–300 words. If it doesn’t, that’s your first fix.

- Check your third-party footprint. Search your brand name in Reddit, industry forums, and news sites. If you’re not being discussed independently, you have a corroboration gap – not a content quality gap.
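For the referral-tracking step, the same segmentation can be sketched offline against exported session data. This is a minimal illustration under stated assumptions, not GA4 configuration: the hostname list is an assumed starting point that you should check against your own referral reports, `is_ai_referral` is a hypothetical helper, and the sample sessions are invented.

```python
from urllib.parse import urlparse, parse_qs

# Assumed list of AI platform hostnames; extend it to match your own reports.
AI_HOSTS = {
    "chatgpt.com",
    "chat.openai.com",
    "perplexity.ai",
    "gemini.google.com",
    "copilot.microsoft.com",
}


def _host(value):
    """Normalise a hostname or URL to a bare lowercase host without 'www.'."""
    value = value.strip().lower()
    host = urlparse(value).hostname if "://" in value else value
    host = host or ""
    return host[4:] if host.startswith("www.") else host


def is_ai_referral(landing_url, referrer=""):
    """True if utm_source or the referrer points at a listed AI platform."""
    params = parse_qs(urlparse(landing_url).query)
    utm_sources = params.get("utm_source", [])
    if any(_host(src) in AI_HOSTS for src in utm_sources):
        return True
    return bool(referrer) and _host(referrer) in AI_HOSTS


# Invented sample sessions: (landing page URL, referrer).
sessions = [
    ("https://example.com/guide?utm_source=chatgpt.com", ""),
    ("https://example.com/guide", "https://www.perplexity.ai/search?q=crm"),
    ("https://example.com/guide", "https://www.google.com/"),
]

ai_sessions = [s for s in sessions if is_ai_referral(*s)]
print(len(ai_sessions), "of", len(sessions), "sessions came from AI platforms")
```

Checking both the `utm_source` parameter and the referrer is a deliberate choice here, since not every platform necessarily tags its outbound links.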

The question has changed

For most of the last decade, content strategy meant search strategy. Rank for the right queries, capture the traffic, convert. That loop still works for traffic.

But AI answers are increasingly where questions get resolved, recommendations get formed, and decisions start. Those answers don’t evaluate your domain rating or your keyword density. They evaluate whether your content is specific enough to cite, original enough to attribute, and trusted enough to surface.

The sites being passed over aren’t all doing bad work. Many of them rank because they played the SEO game well. The problem is that the game changed, and citation avoidance is the first signal that the old playbook has limits that are becoming expensive to ignore.

The question isn’t whether you rank. It’s whether, when an AI constructs an answer about your topic, your content is the kind it trusts enough to cite.

The invisible ranking factor

How AI models decide who not to cite, and what you can do about it.

“AI models are not search engines – they’re editorial curators. They select for depth, not just relevance.”

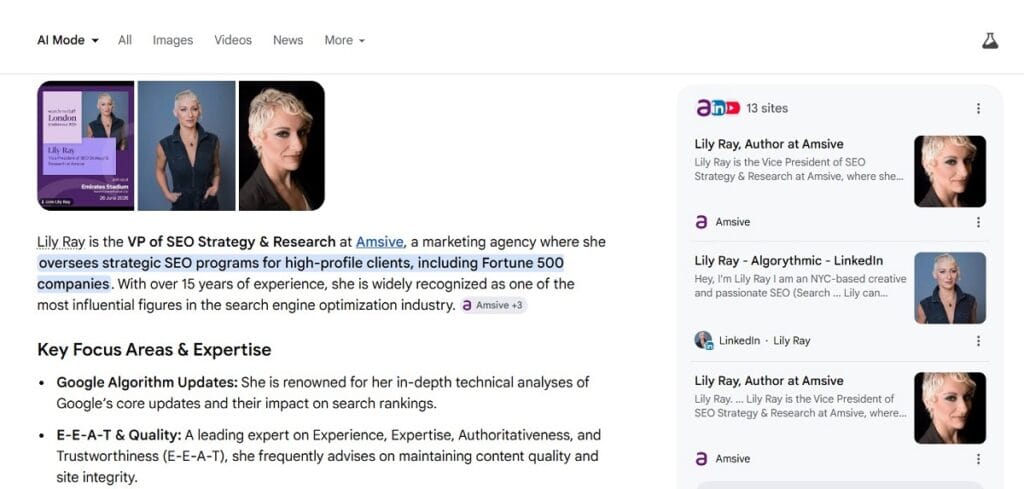

— Lily Ray, VP of SEO Research & Communications, Amsive

In plain terms: instead of chasing keyword density, focus on the one thing AI can’t fake – genuine editorial judgment and original insight.

Key ranking signals at a glance

| Factor | Why it matters to AI | How it helps you |
|---|---|---|
| Originality | AI skips content that closely mirrors other pages – it adds no new information to its output | Proprietary data, first-hand surveys, or unique angles are nearly impossible for AI to replace |
| Expertise signals | Named authors, credentials, and institutional affiliations boost trust weighting in training and retrieval | A clear byline with verifiable credentials can lift citation probability substantially |
| Editorial judgment | AI models favour pages that take a clear stance or synthesise conflicting views – not just summaries | Opinionated, well-reasoned analysis gets cited because it resolves ambiguity the AI cannot resolve alone |

Surprising fact

Did you know that publishing less content, but with more depth, can actually increase AI citation rates? Most creators assume volume wins, but AI models are designed to filter out noise. One highly original, expert-backed piece consistently outperforms ten thin summaries in AI-generated answers.

Frequently asked questions

Why does my website rank on Google but not appear in AI answers?

Google ranks pages using backlinks, keywords, and engagement signals. AI models cite sources based on specificity, content extractability, and third-party validation. These are fundamentally different criteria – a page can hold the #1 position and still fail all four AI trust filters.

The two systems are now measuring different things. Google’s algorithm has learned to treat links and engagement as proxies for quality. AI retrieval systems were trained on text and learned to recognise what trustworthy, citable writing looks like, and those patterns don’t always correlate with high-ranking pages. By early 2026, the overlap between top-10 Google rankings and AI Overview citations had fallen to between 17% and 38%, down from 76% in mid-2025.

What content does AI refuse to cite?

AI models consistently ignore: over-optimised affiliate content where commercial intent overrides clarity; SEO-first writing that buries the answer; hedged, non-committal writing with no specific claim; and content with no independent third-party corroboration.

These four patterns trigger what can be called citation avoidance – the model either synthesises the information without attribution or skips the page entirely. The Princeton GEO study found that keyword stuffing (a standard SEO practice) actually decreased AI citation visibility by 10%, while adding statistics and specific claims improved it by up to 40%.

How do I get my website cited by ChatGPT or Google AI Overviews?

Lead with your direct answer in the first 200 words. Include at least one concrete statistic per section. Make specific, attributable claims. Earn brand mentions across independent sources. Use schema markup to make content machine-readable.

The Princeton GEO study (published at KDD 2024, co-authored by researchers at Princeton, Georgia Tech, IIT Delhi, and the Allen Institute for AI) found that adding statistics improved AI citation visibility by up to 40%, quotations by up to 37%, and source citations by up to 30%. Brand mentions across third-party sources are three times more predictive of AI visibility than backlinks, according to Ahrefs research. Structure your content so that every H3 functions as a standalone, extractable passage that makes sense without its surrounding context.

What is the difference between SEO and GEO?

SEO targets ranking positions in Google’s link results through backlinks, keywords, and technical signals. GEO (Generative Engine Optimization) targets being cited inside AI-generated answers through content specificity, entity authority, and structural extractability. As of 2026, these are distinct disciplines.

The term GEO was formalised in a 2024 paper by researchers at Princeton University, Georgia Tech, IIT Delhi, and the Allen Institute for AI. Traditional SEO optimises for where your page appears in a list. GEO optimises for whether your content becomes the source an AI system quotes when constructing an answer. The overlap between top SEO performance and top AI citation performance has shrunk significantly – which means a strategy that ignores one of these disciplines is now leaving visible gaps.

Does ranking #1 on Google guarantee AI visibility?

No. A #1 Google ranking translates to an AI Overview citation only about 33% of the time as of early 2026. The overlap between top-10 organic rankings and AI citations has fallen from 76% in mid-2025 to between 17% and 38% by early 2026.

This is the core problem the article addresses. The assumption that good SEO and good AI visibility are the same exercise was supported by the data as recently as July 2025. By early 2026, two separate studies – from Ahrefs and BrightEdge – put the overlap between 17% and 38%. The query fan-out mechanism helps explain why: AI systems evaluate content against multiple related sub-queries simultaneously, and a page optimised for a single keyword may only satisfy one of them.

Hi, I am Khalid. I am an SEO and AI Search Specialist.

My goal is simple: I help your business get found by the right people.

For a long time, getting found just meant showing up on the first page of regular Google search. Today, the internet is changing. People are asking their questions to AI tools like ChatGPT and Google’s new AI features.

My job is to connect the old way of searching with the new way. When a potential customer asks an AI a question about what you do, I make sure your business is the trusted answer they get.

I do not use confusing words or secret tricks. I use clear and honest plans to get you noticed and bring real buyers straight to your website.

Want to see how I can make your brand the top answer? Connect with me on social media or read my exact steps at khalidseo.com.